Simulation Study 1

Model 1 Results

R. Noah Padgett

2022-01-10

Last updated: 2022-03-08


Knit directory: Padgett-Dissertation/

This reproducible R Markdown analysis was created with workflowr (version 1.6.2). The Checks tab describes the reproducibility checks that were applied when the results were created. The Past versions tab lists the development history.

Great job! The global environment was empty. Objects defined in the global environment can affect the analysis in your R Markdown file in unknown ways. For reproducibility it’s best to always run the code in an empty environment.

The command set.seed(20210401) was run prior to running the code in the R Markdown file. Setting a seed ensures that any results that rely on randomness, e.g. subsampling or permutations, are reproducible.

Great job! Recording the operating system, R version, and package versions is critical for reproducibility.

- model1

- study1-model1-ppd

To ensure reproducibility of the results, delete the cache directory study1_model1_results_cache and re-run the analysis. To have workflowr automatically delete the cache directory prior to building the file, set delete_cache = TRUE when running wflow_build() or wflow_publish().

Great job! Using relative paths to the files within your workflowr project makes it easier to run your code on other machines.

Tracking code development and connecting the code version to the results is critical for reproducibility. To start using Git, open the Terminal and type git init in your project directory.

This project is not being versioned with Git. To obtain the full reproducibility benefits of using workflowr, please see ?wflow_start.

# Load packages & utility functions

source("code/load_packages.R")

source("code/load_utility_functions.R")

# environment options

options(scipen = 999, digits=3)

# get code to simulate data

source("code/study_1/study_1_generate_data.R")

Simulated Data

# data parameters

paravec <- c(

N = 500

, J = 5 # N_items

, C = 3 # N_cat

, etaCor = .23

, etasd1 = 1

, etasd2 = sqrt(0.1)

, lambda=0.7

, nu=1.5

, sigma.ei=0.25

, rho1=0.1

)

# simulated then saved below

sim_tau <- matrix(

c(-0.822, -0.751, -0.616, -0.392, -0.865,

0.780, 0.882, 0.827, 1.030, 0.877),

ncol=2, nrow=5

)
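Because `matrix()` fills column by column, the first five values above become the lower thresholds and the last five the upper thresholds. The short sketch below rebuilds the same matrix and checks the ordering; the row and column labels are added only for illustration and are not part of the original object.

```r
# Threshold values copied from the code above
sim_tau <- matrix(
  c(-0.822, -0.751, -0.616, -0.392, -0.865,
     0.780,  0.882,  0.827,  1.030,  0.877),
  ncol = 2, nrow = 5
)

# Illustrative labels only (assumption: not used in the original analysis)
dimnames(sim_tau) <- list(paste0("item_", 1:5), c("tau_1", "tau_2"))
print(sim_tau)

# With three ordered categories, each item needs tau_1 < tau_2
ordered_ok <- all(sim_tau[, "tau_1"] < sim_tau[, "tau_2"])
print(ordered_ok)
```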

# Use parameters to simulate data

sim.data <- simulate_data_misclass(paravec, tau=sim_tau)

Describing the Observed (simulated) Data

d1 <- sim.data$Ysampled %>%

as.data.frame() %>%

select(contains("y")) %>%

mutate(id = 1:n()) %>%

pivot_longer(

cols = contains("y"),

names_to = c("item"),

values_to = "Response"

) %>%

mutate(item = ifelse(nchar(item) > 2, substr(item, 2, 3), substr(item, 2, 2)))

d2 <- sim.data$logt %>%

as.data.frame() %>%

select(contains("logt")) %>%

mutate(id = 1:n()) %>%

pivot_longer(

cols = contains("logt"),

names_to = c("item"),

values_to = "Time"

) %>%

mutate(item = ifelse(nchar(item) > 5, substr(item, 5, 6), substr(item, 5, 5)))

dat <- left_join(d1, d2)

Joining, by = c("id", "item")

dat_sum <- dat %>%

select(item, Response, Time) %>%

group_by(item) %>%

summarize(

p1 = table(Response)[1] / n(),

p2 = table(Response)[2] / n(),

p3 = table(Response)[3] / n(),

M1 = mean(Response, na.rm = T),

Mt = mean(Time, na.rm = T),

SDt = sd(Time, na.rm = T)

)

colnames(dat_sum) <-

c(

"Item",

"Prop. R == 1",

"Prop. R == 2",

"Prop. R == 3",

"Mean Response",

"Mean Response Time",

"SD Response Time"

)

dat_sum$Item <- paste0("item_", 1:N_items)

kable(dat_sum, format = "html", digits = 3) %>%

kable_styling(full_width = T)

| Item | Prop. R == 1 | Prop. R == 2 | Prop. R == 3 | Mean Response | Mean Response Time | SD Response Time |
|---|---|---|---|---|---|---|
| item_1 | 0.308 | 0.404 | 0.288 | 1.98 | 1.39 | 0.597 |
| item_2 | 0.310 | 0.414 | 0.276 | 1.97 | 1.43 | 0.618 |
| item_3 | 0.338 | 0.386 | 0.276 | 1.94 | 1.43 | 0.613 |
| item_4 | 0.362 | 0.384 | 0.254 | 1.89 | 1.40 | 0.592 |
| item_5 | 0.292 | 0.422 | 0.286 | 1.99 | 1.36 | 0.582 |
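The category proportions in this table imply a pair of standard-normal thresholds per item: the cumulative proportion up to each category is passed through the inverse normal CDF. The sketch below does this with base R only, using the rounded proportions copied from the table. It is an illustration of the idea and should closely match, though not necessarily reproduce exactly, the `tau` values that `psych::polychoric()` reports later in this section.

```r
# Category proportions copied from the summary table (rounded to 3 decimals)
props <- rbind(
  item_1 = c(0.308, 0.404, 0.288),
  item_2 = c(0.310, 0.414, 0.276),
  item_3 = c(0.338, 0.386, 0.276),
  item_4 = c(0.362, 0.384, 0.254),
  item_5 = c(0.292, 0.422, 0.286)
)

# Cumulative proportions for categories 1 and 2 (the third is always 1)
cum_props <- t(apply(props, 1, cumsum))[, 1:2]

# Thresholds on the standard-normal scale
tau_hat <- qnorm(cum_props)
colnames(tau_hat) <- c("1", "2")
print(round(tau_hat, 2))
```

These marginal thresholds sit closer to zero than the generating values in `sim_tau`, which is expected here because they are expressed on a scale where the latent response has unit total variance.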

# covariance among items

cov(sim.data$Ysampled)

       y1     y2     y3     y4     y5
y1 0.5968 0.0634 0.0428 0.0640 0.0319
y2 0.0634 0.5860 0.0440 0.0364 0.0258
y3 0.0428 0.0440 0.6114 0.0394 0.0457
y4 0.0640 0.0364 0.0394 0.6055 0.0655
y5 0.0319 0.0258 0.0457 0.0655 0.5791

# correlation matrix

psych::polychoric(sim.data$Ysampled)

Call: psych::polychoric(x = sim.data$Ysampled)

Polychoric correlations

   y1   y2   y3   y4   y5
y1 1.00
y2 0.14 1.00
y3 0.09 0.09 1.00
y4 0.13 0.08 0.08 1.00
y5 0.07 0.05 0.09 0.14 1.00

with tau of

       1    2
y1 -0.50 0.56
y2 -0.50 0.59
y3 -0.42 0.59
y4 -0.35 0.66
y5 -0.55 0.57

Model 1: Traditional IFA

Model details
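For orientation, the JAGS model file printed in this section can be summarized in equation form. The notation below is read directly off the model code (person \(p\), item \(i\), \(J\) = `nit` items); \(\Phi\) is the standard normal CDF (`phi()` in JAGS), and the second argument of `dnorm` in JAGS is a precision, so a precision of 1 corresponds to a residual variance of 1.

```latex
\begin{aligned}
y^{*}_{pi} \mid \eta_p &\sim \mathcal{N}(\lambda_i \eta_p,\ 1), \qquad \eta_p \sim \mathcal{N}(0, 1) \\
\Pr(y_{pi} = 1) &= 1 - \Phi\left(y^{*}_{pi} - \tau_{i1}\right) \\
\Pr(y_{pi} = 2) &= \Phi\left(y^{*}_{pi} - \tau_{i1}\right) - \Phi\left(y^{*}_{pi} - \tau_{i2}\right) \\
\Pr(y_{pi} = 3) &= \Phi\left(y^{*}_{pi} - \tau_{i2}\right) \\
\theta_i &= 1 + \lambda_i^{2}, \qquad
\lambda^{\mathrm{std}}_i = \frac{\lambda_i}{\sqrt{\theta_i}} \\
\omega &= \frac{\left(\sum_{i=1}^{J} \lambda_i\right)^{2}}{\left(\sum_{i=1}^{J} \lambda_i\right)^{2} + J}
\end{aligned}
```

The three category probabilities sum to one for every person and item, and the constraint \(\tau_{i1} < \tau_{i2}\) imposed by the truncated prior keeps the middle probability non-negative.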

cat(read_file(paste0(w.d, "/code/study_1/model_1.txt")))

model {

### Model

for(p in 1:N){

for(i in 1:nit){

# data model

y[p,i] ~ dcat(pi[p,i, ])

# LRV

ystar[p,i] ~ dnorm(lambda[i]*eta[p], 1)

# Pr(nu = 3)

pi[p,i,3] = phi(ystar[p,i] - tau[i,2])

# Pr(nu = 2)

pi[p,i,2] = phi(ystar[p,i] - tau[i,1]) - phi(ystar[p,i] - tau[i,2])

# Pr(nu = 1)

pi[p,i,1] = 1 - phi(ystar[p,i] - tau[i,1])

}

}

### Priors

# person parameters

for(p in 1:N){

eta[p] ~ dnorm(0, 1) # latent ability

}

for(i in 1:nit){

# Thresholds

tau[i, 1] ~ dnorm(0.0,0.1)

tau[i, 2] ~ dnorm(0, 0.1)T(tau[i, 1],)

# loadings

lambda[i] ~ dnorm(0, .44)T(0,)

# LRV total variance

# total variance = residual variance + fact. Var.

theta[i] = 1 + pow(lambda[i],2)

# standardized loading

lambda.std[i] = lambda[i]/pow(theta[i],0.5)

}

# compute omega

lambda_sum[1] = lambda[1]

for(i in 2:nit){

#lambda_sum (sum factor loadings)

lambda_sum[i] = lambda_sum[i-1]+lambda[i]

}

reli.omega = (pow(lambda_sum[nit],2))/(pow(lambda_sum[nit],2)+nit)

}

Model results

# Save parameters

jags.params <- c("tau", "lambda", "theta", "reli.omega", "lambda.std")

# initial-values

jags.inits <- function(){

list(

"tau"=matrix(c(-0.822, -0.751, -0.616, -0.392, -0.865,

0.780, 0.882, 0.827, 1.030, 0.877),

ncol=2, nrow=5),

"lambda"=rep(0.7,5),

"eta"=sim.data$eta[,1,drop=T],

"ystar"=t(sim.data$ystar)

)

}
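When comparing these initial values with the priors in the model file, it helps to remember that JAGS parameterizes `dnorm` by precision rather than standard deviation. The sketch below converts the two precisions that appear in the model (0.1 for the thresholds, 0.44 for the loadings) to the standard-deviation scale; the vector names are illustrative.

```r
# Precisions copied from the priors in model_1.txt
prior_precision <- c(tau = 0.1, lambda = 0.44)

# JAGS dnorm(mean, precision): sd = 1 / sqrt(precision)
prior_sd <- 1 / sqrt(prior_precision)
print(round(prior_sd, 2))
```

On this scale the threshold prior has a standard deviation of a little over 3 and the loading prior, before truncation at zero, about 1.5, so the initial values passed to JAGS lie well inside both priors.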

# data

mydata <- list(y = sim.data$Ysampled,

N = nrow(sim.data$Ysampled),

nit = ncol(sim.data$Ysampled))

model.fit <- R2jags::jags(

model = paste0(w.d, "/code/study_1/model_1.txt"),

parameters.to.save = jags.params,

inits = jags.inits,

data = mydata,

n.chains = 4,

n.burnin = 5000,

n.iter = 10000

)

module glm loaded

Compiling model graph

Resolving undeclared variables

Allocating nodes

Graph information:

Observed stochastic nodes: 2500

Unobserved stochastic nodes: 3015

Total graph size: 25550

Initializing model

print(model.fit, width=1000)

Inference for Bugs model at "C:/Users/noahp/Documents/GitHub/Padgett-Dissertation/code/study_1/model_1.txt", fit using jags,

4 chains, each with 10000 iterations (first 5000 discarded), n.thin = 5

n.sims = 4000 iterations saved

mu.vect sd.vect 2.5% 25% 50% 75% 97.5% Rhat n.eff

lambda[1] 0.573 0.234 0.213 0.420 0.542 0.690 1.108 1.02 500

lambda[2] 0.422 0.172 0.121 0.303 0.408 0.522 0.803 1.00 760

lambda[3] 0.389 0.166 0.097 0.277 0.377 0.482 0.767 1.01 400

lambda[4] 0.553 0.217 0.209 0.408 0.528 0.667 1.021 1.01 360

lambda[5] 0.389 0.160 0.106 0.277 0.379 0.487 0.733 1.00 750

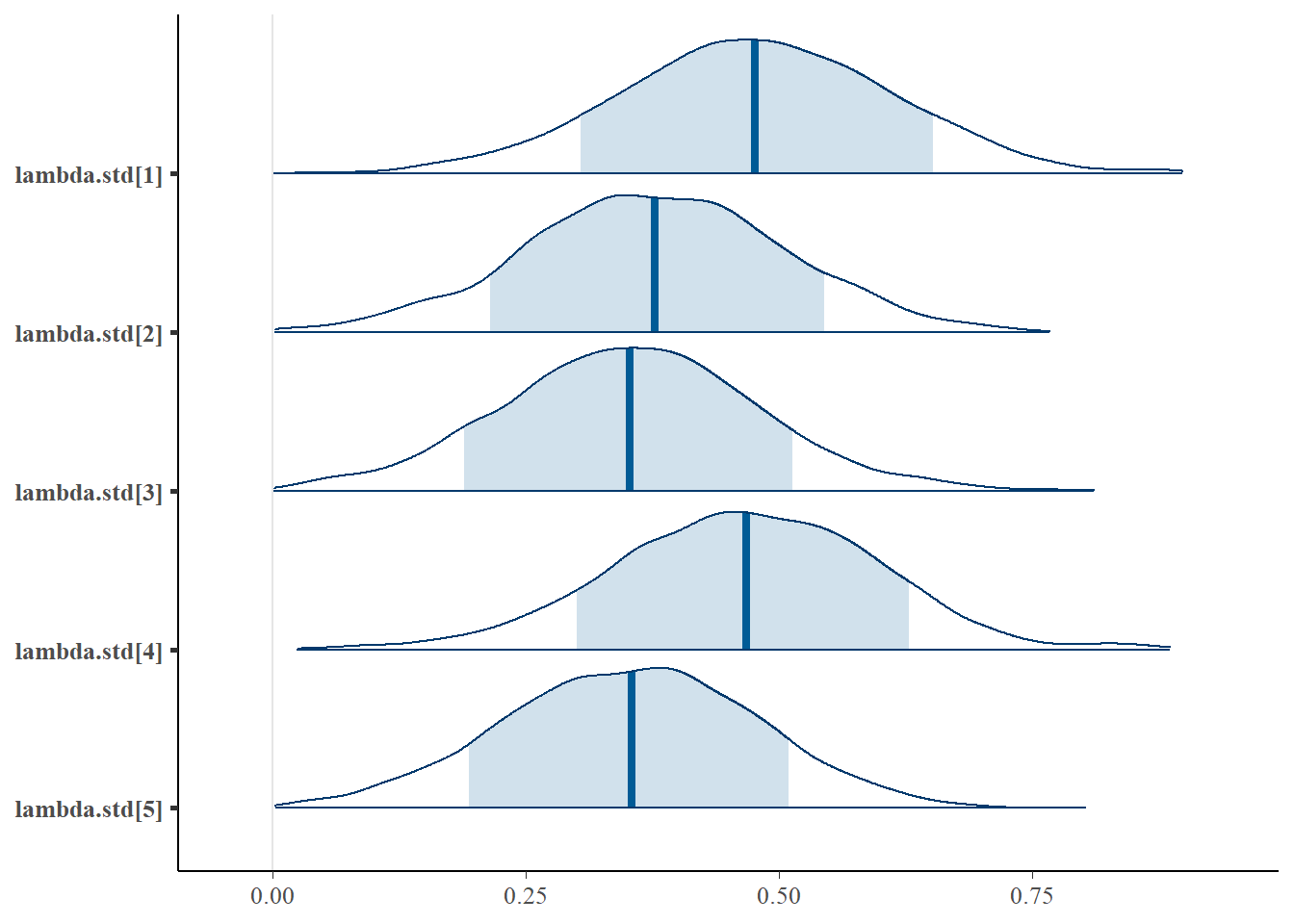

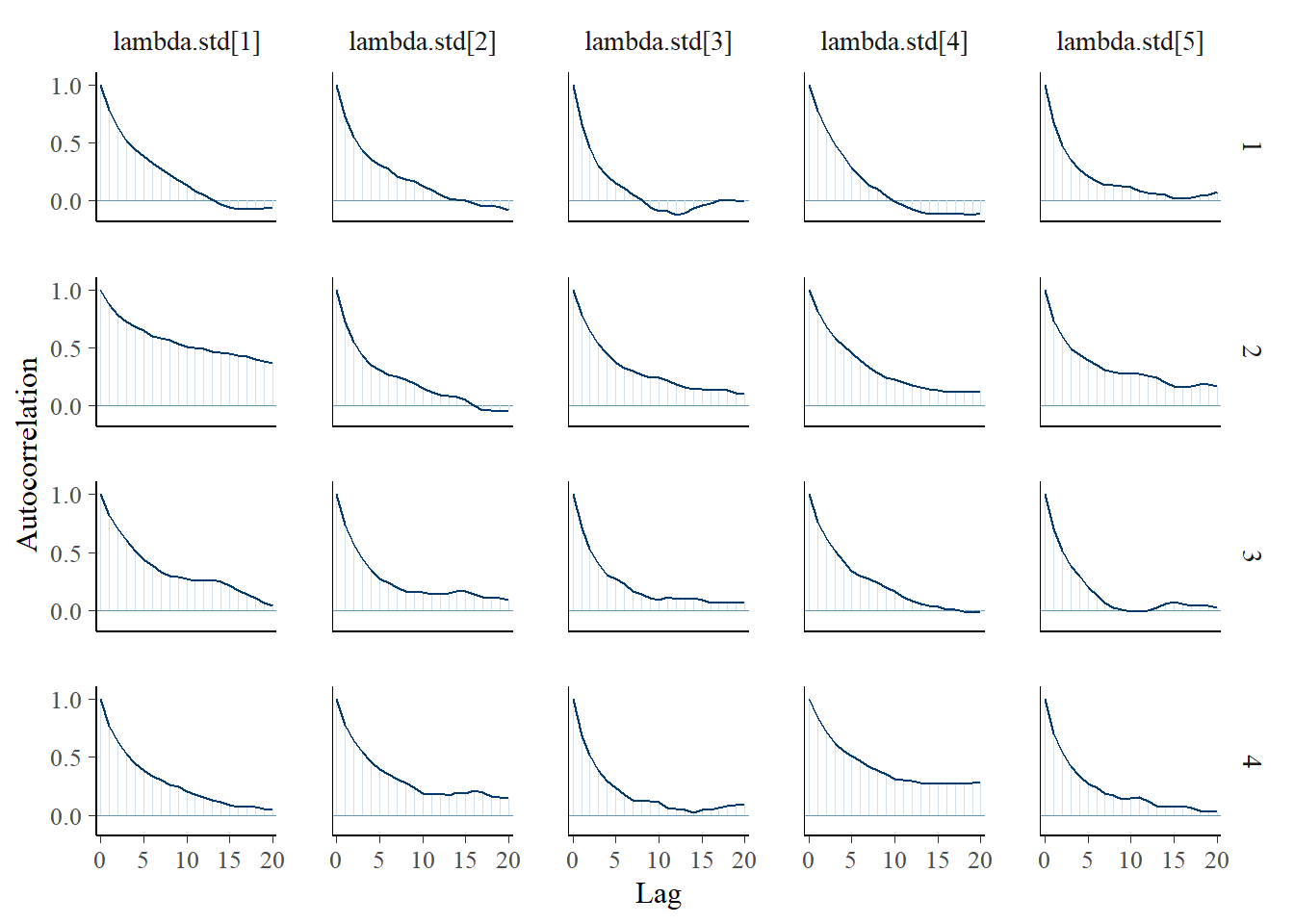

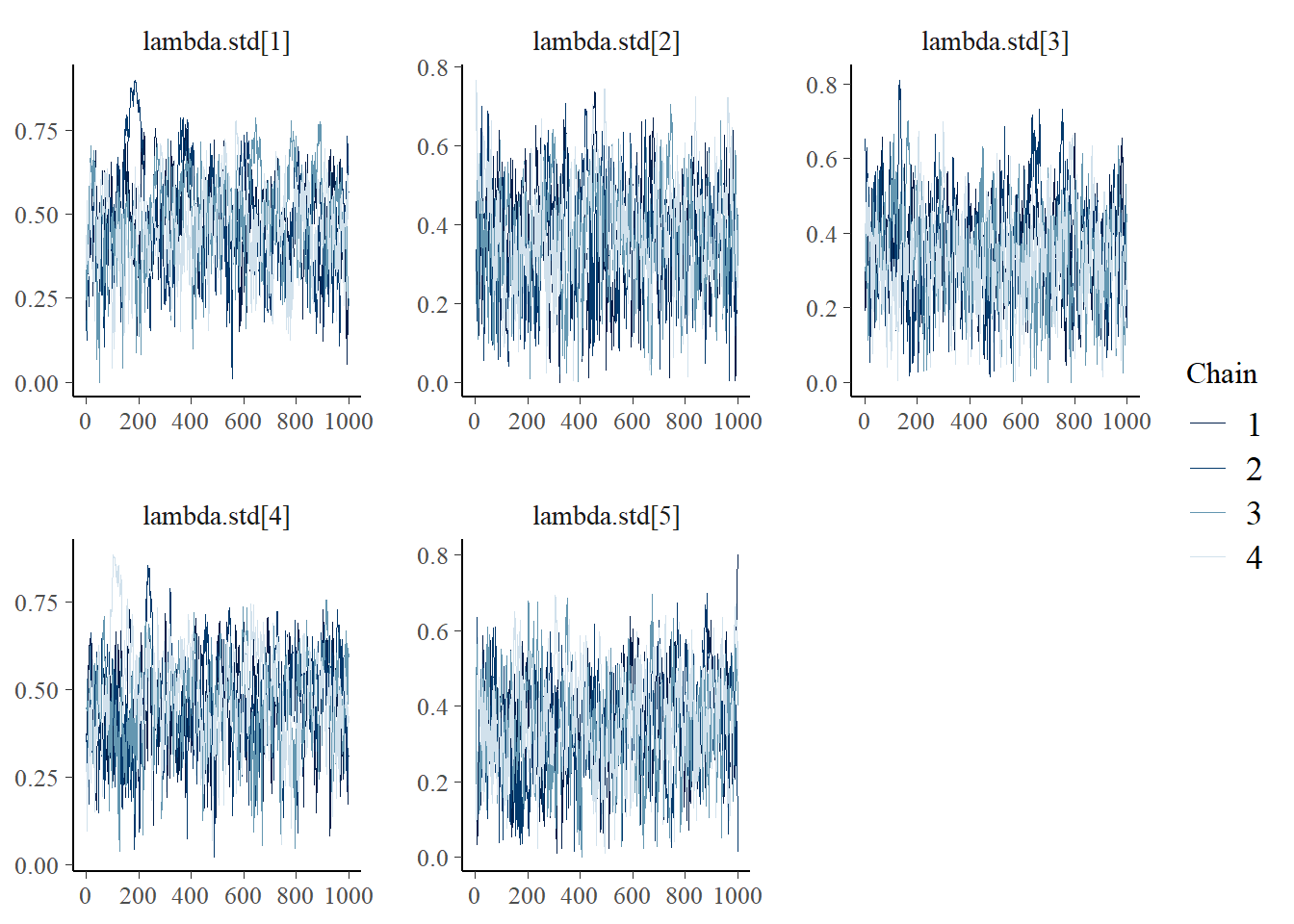

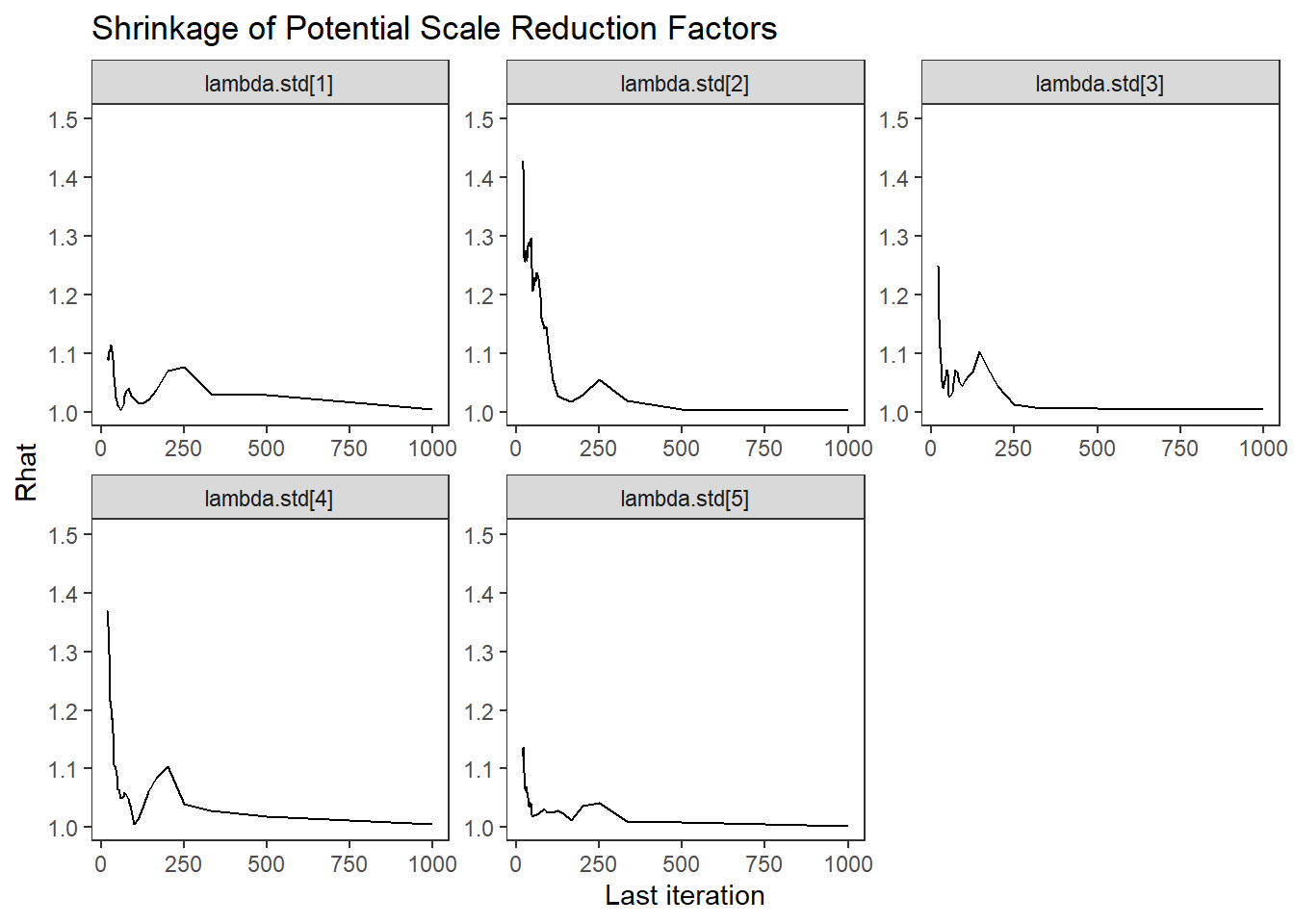

lambda.std[1] 0.477 0.135 0.208 0.387 0.476 0.568 0.742 1.01 580

lambda.std[2] 0.377 0.129 0.120 0.290 0.377 0.463 0.626 1.00 860

lambda.std[3] 0.352 0.127 0.097 0.267 0.353 0.434 0.609 1.02 410

lambda.std[4] 0.466 0.131 0.205 0.378 0.467 0.555 0.714 1.01 480

lambda.std[5] 0.352 0.124 0.105 0.267 0.354 0.438 0.591 1.00 760

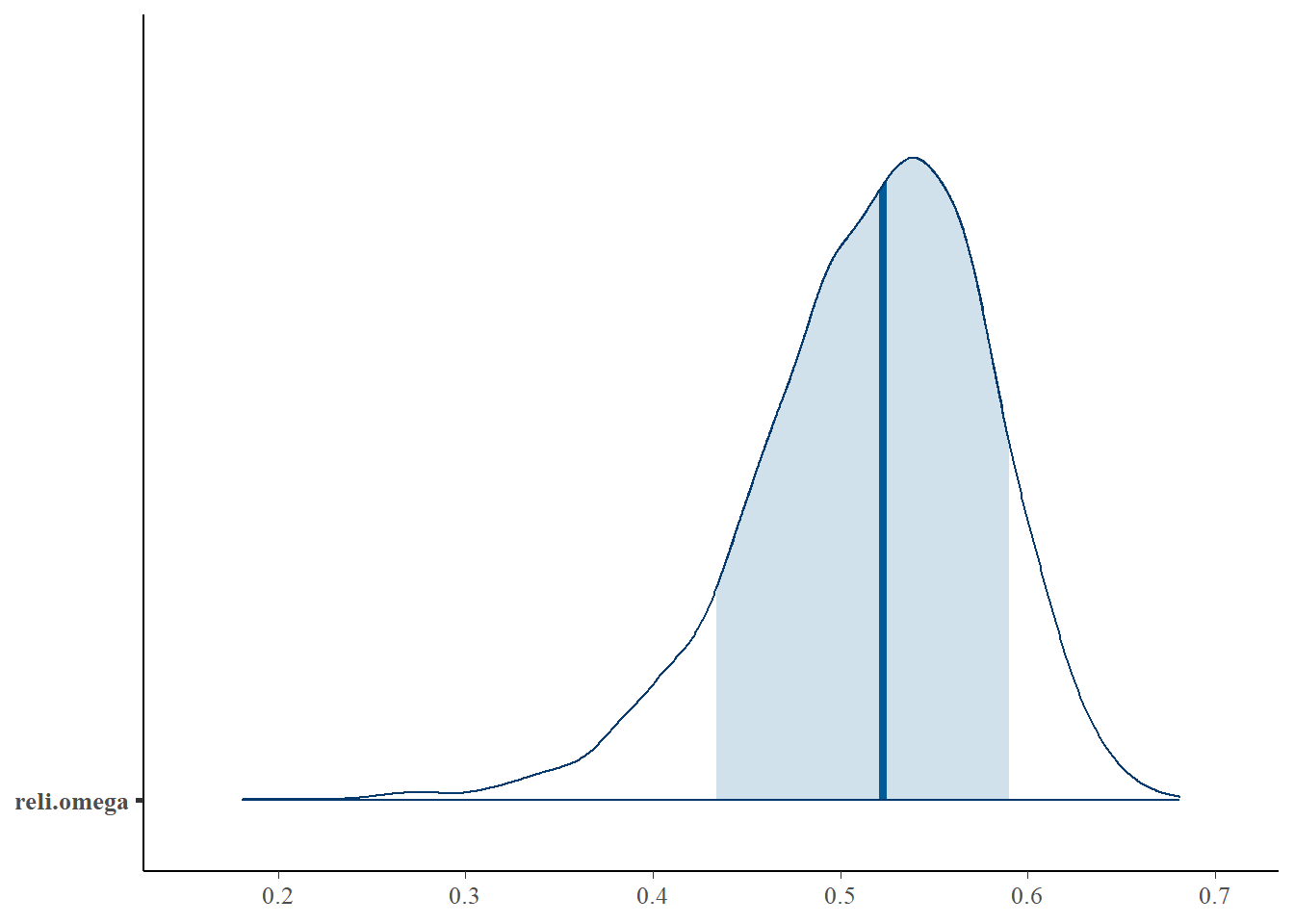

reli.omega 0.516 0.063 0.377 0.478 0.523 0.561 0.620 1.01 500

tau[1,1] -0.771 0.102 -0.978 -0.834 -0.767 -0.702 -0.584 1.01 1100

tau[2,1] -0.735 0.091 -0.918 -0.797 -0.732 -0.673 -0.562 1.00 2500

tau[3,1] -0.618 0.088 -0.798 -0.677 -0.614 -0.559 -0.448 1.00 3900

tau[4,1] -0.540 0.095 -0.732 -0.597 -0.538 -0.479 -0.362 1.01 1000

tau[5,1] -0.808 0.091 -0.983 -0.871 -0.806 -0.746 -0.631 1.00 1300

tau[1,2] 0.861 0.109 0.669 0.789 0.855 0.926 1.094 1.01 750

tau[2,2] 0.884 0.095 0.700 0.821 0.883 0.946 1.078 1.00 850

tau[3,2] 0.879 0.092 0.706 0.816 0.877 0.938 1.063 1.00 1100

tau[4,2] 1.011 0.108 0.815 0.940 1.006 1.075 1.238 1.00 1400

tau[5,2] 0.832 0.090 0.661 0.772 0.831 0.890 1.013 1.00 4000

theta[1] 1.383 0.365 1.045 1.177 1.293 1.476 2.228 1.06 200

theta[2] 1.208 0.168 1.015 1.092 1.166 1.273 1.645 1.01 470

theta[3] 1.179 0.157 1.009 1.077 1.142 1.232 1.589 1.03 250

theta[4] 1.353 0.317 1.044 1.167 1.279 1.445 2.042 1.04 180

theta[5] 1.177 0.143 1.011 1.077 1.144 1.237 1.537 1.00 830

deviance 3947.897 68.595 3811.240 3902.434 3948.085 3993.949 4080.362 1.00 760

For each parameter, n.eff is a crude measure of effective sample size,

and Rhat is the potential scale reduction factor (at convergence, Rhat=1).

DIC info (using the rule, pD = var(deviance)/2)

pD = 2345.3 and DIC = 6293.2

DIC is an estimate of expected predictive error (lower deviance is better).

Posterior Distribution Summary
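The derived quantities in the printed summary (`theta`, `lambda.std`, `reli.omega`) are deterministic functions of the loadings, so they can be sanity-checked from the `lambda` rows alone. The sketch below uses the posterior means and standard deviations copied from that output. It is a rough check rather than a substitute for the MCMC draws: plugging posterior means into a nonlinear function does not generally return the posterior mean of that function.

```r
# Posterior means and SDs of lambda, copied from the printed summary
lambda_mean <- c(0.573, 0.422, 0.389, 0.553, 0.389)
lambda_sd   <- c(0.234, 0.172, 0.166, 0.217, 0.160)
nit <- length(lambda_mean)

# Plug-in versions of the derived quantities defined in the model file
theta_plugin      <- 1 + lambda_mean^2
lambda_std_plugin <- lambda_mean / sqrt(theta_plugin)
omega_plugin      <- sum(lambda_mean)^2 / (sum(lambda_mean)^2 + nit)

# E[1 + lambda^2] = 1 + E[lambda]^2 + Var(lambda), which accounts for the
# gap between the plug-in theta and the reported posterior mean of theta
theta_expected <- 1 + lambda_mean^2 + lambda_sd^2

print(round(theta_plugin, 3))
print(round(theta_expected, 3))
print(round(omega_plugin, 3))
```

The variance-adjusted `theta_expected` values line up with the reported `theta` means, while the plug-in omega is close to, but not identical to, the reported posterior mean of `reli.omega`.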

# extract for plotting

jags.mcmc <- as.mcmc(model.fit)

a <- colnames(as.data.frame(jags.mcmc[[1]]))

fit.mcmc <- data.frame(as.matrix(jags.mcmc, chains = T, iters = T))

colnames(fit.mcmc) <- c("chain", "iter", a)

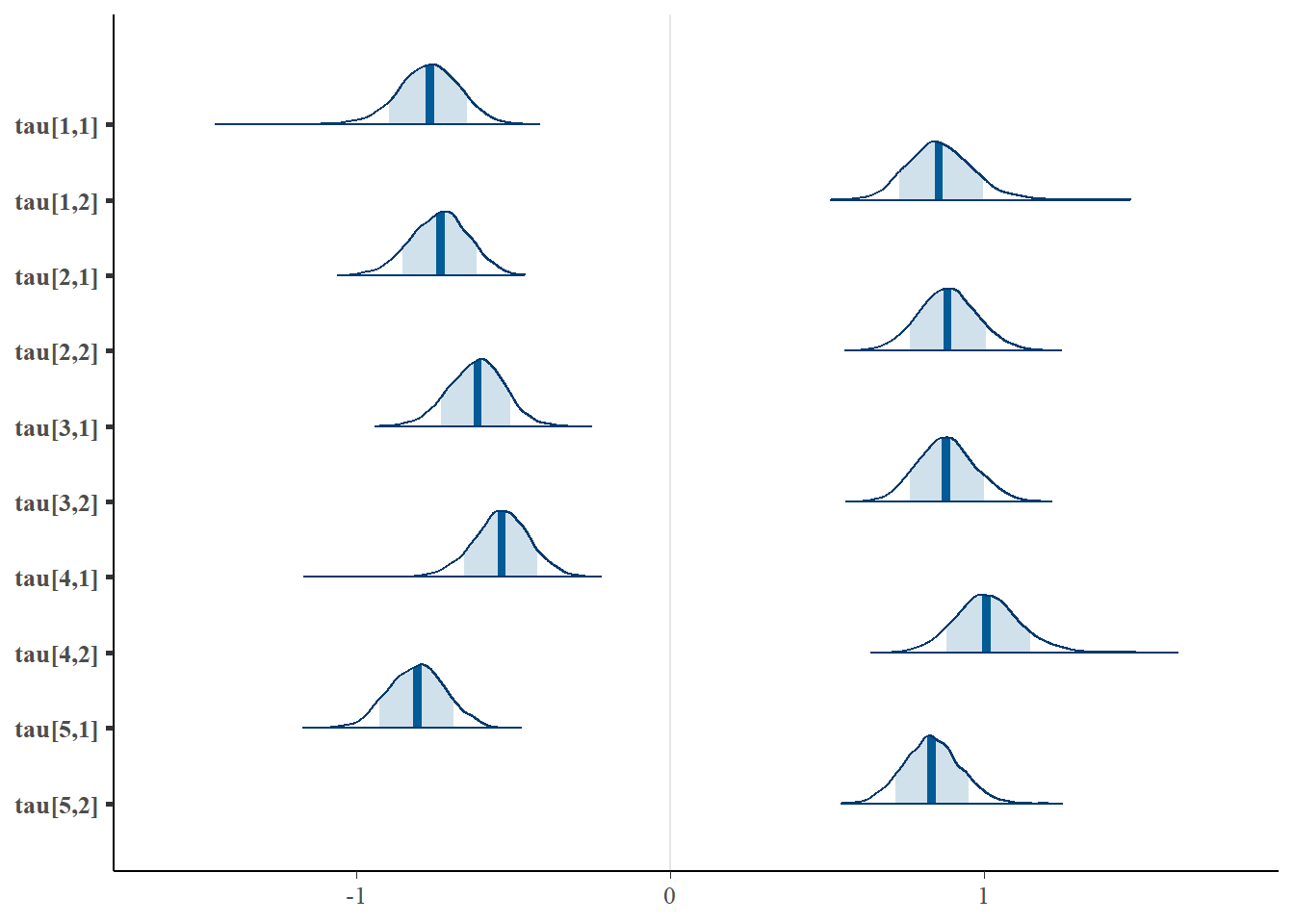

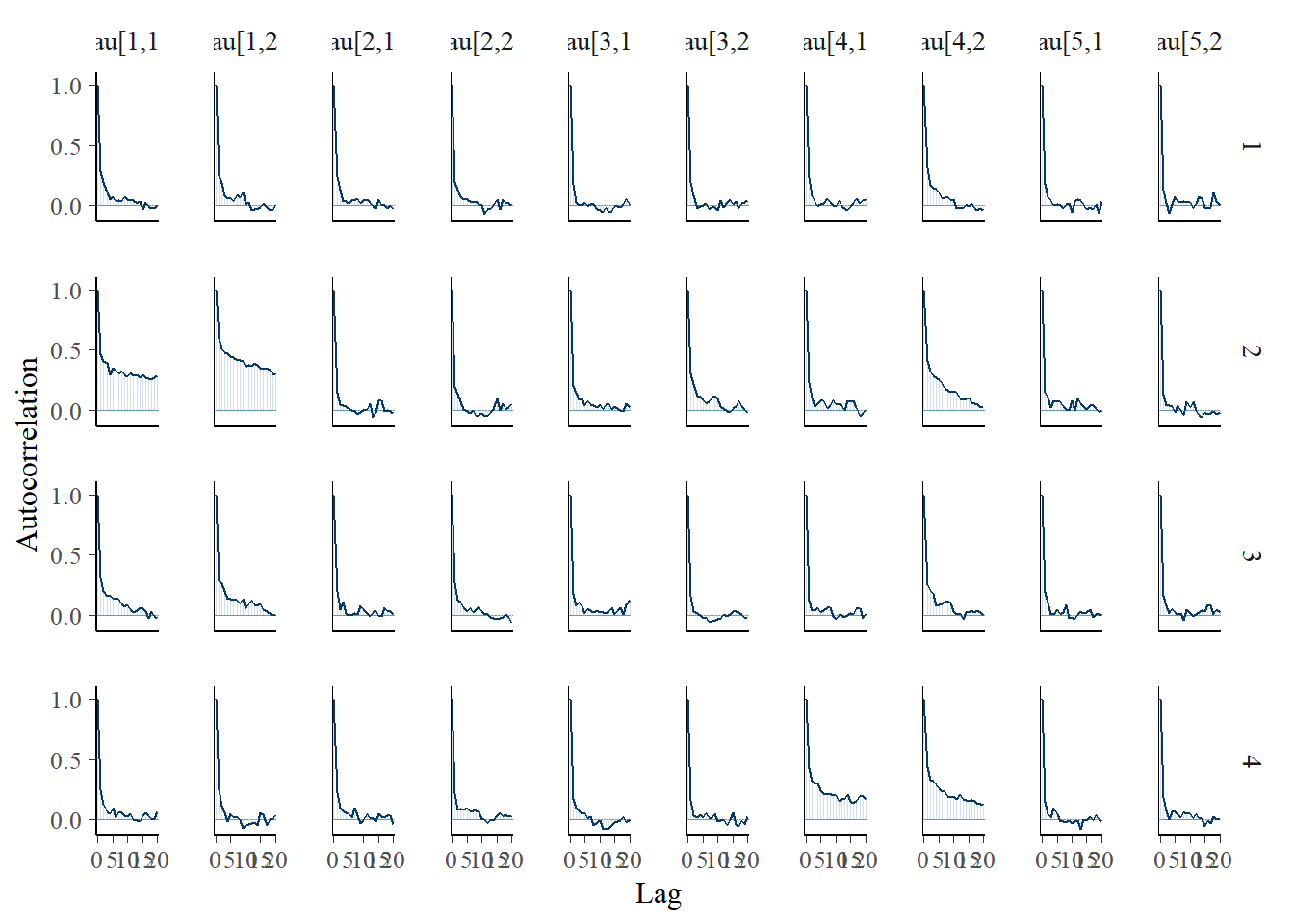

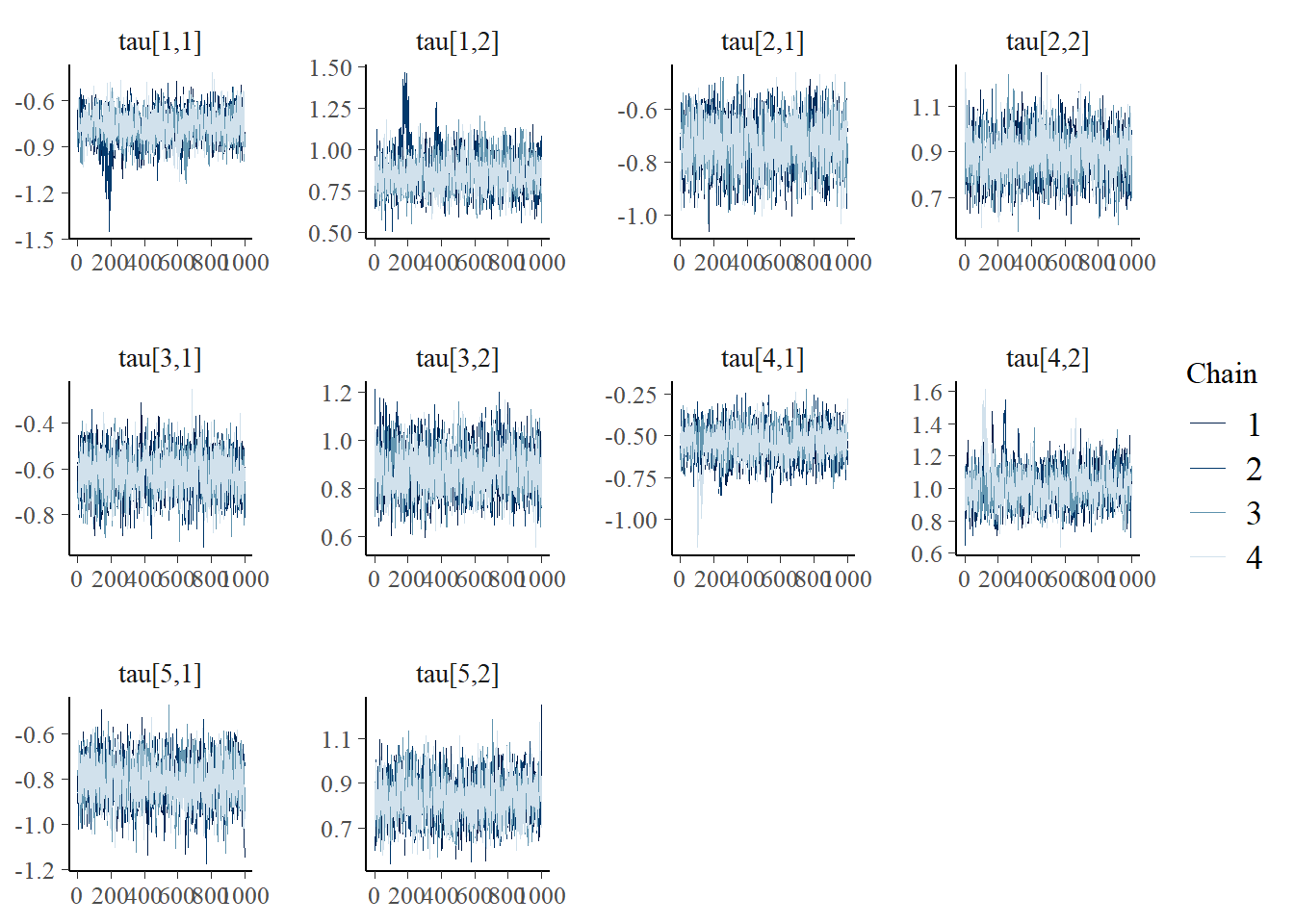

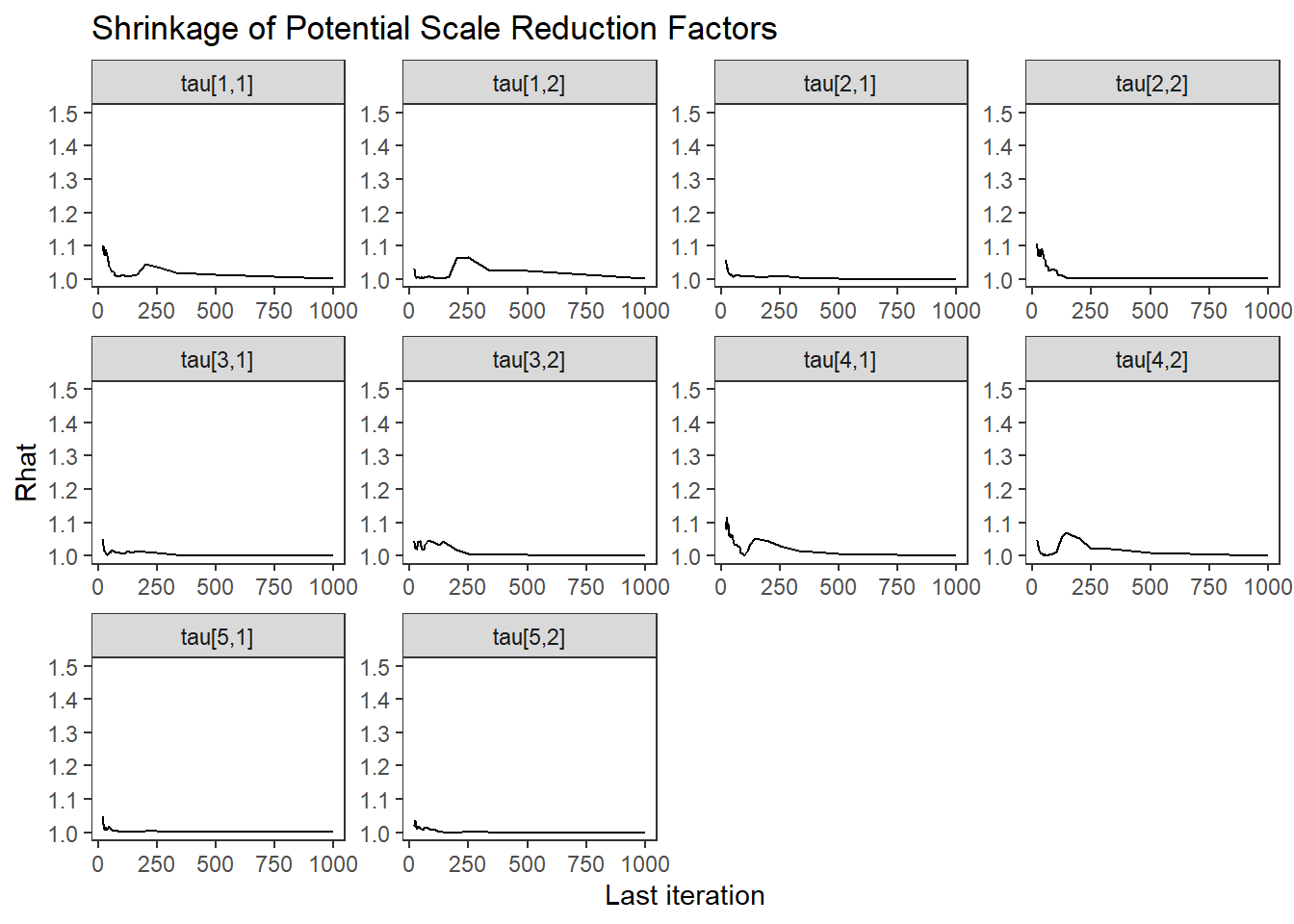

fit.mcmc.ggs <- ggmcmc::ggs(jags.mcmc) # for GRB plot

Category Thresholds (\(\tau\))

# tau

bayesplot::mcmc_areas(fit.mcmc, regex_pars = "tau", prob = 0.8); ggsave("fig/study1_model1_tau_dens.pdf")

Saving 7 x 5 in image

bayesplot::mcmc_acf(fit.mcmc, regex_pars = "tau"); ggsave("fig/study1_model1_tau_acf.pdf")

Saving 7 x 5 in image

bayesplot::mcmc_trace(fit.mcmc, regex_pars = "tau"); ggsave("fig/study1_model1_tau_trace.pdf")

Saving 7 x 5 in image

ggmcmc::ggs_grb(fit.mcmc.ggs, family = "tau") + theme_bw()+theme(panel.grid = element_blank()); ggsave("fig/study1_model1_tau_grb.pdf")

Saving 7 x 5 in image

Factor Loadings (\(\lambda\))

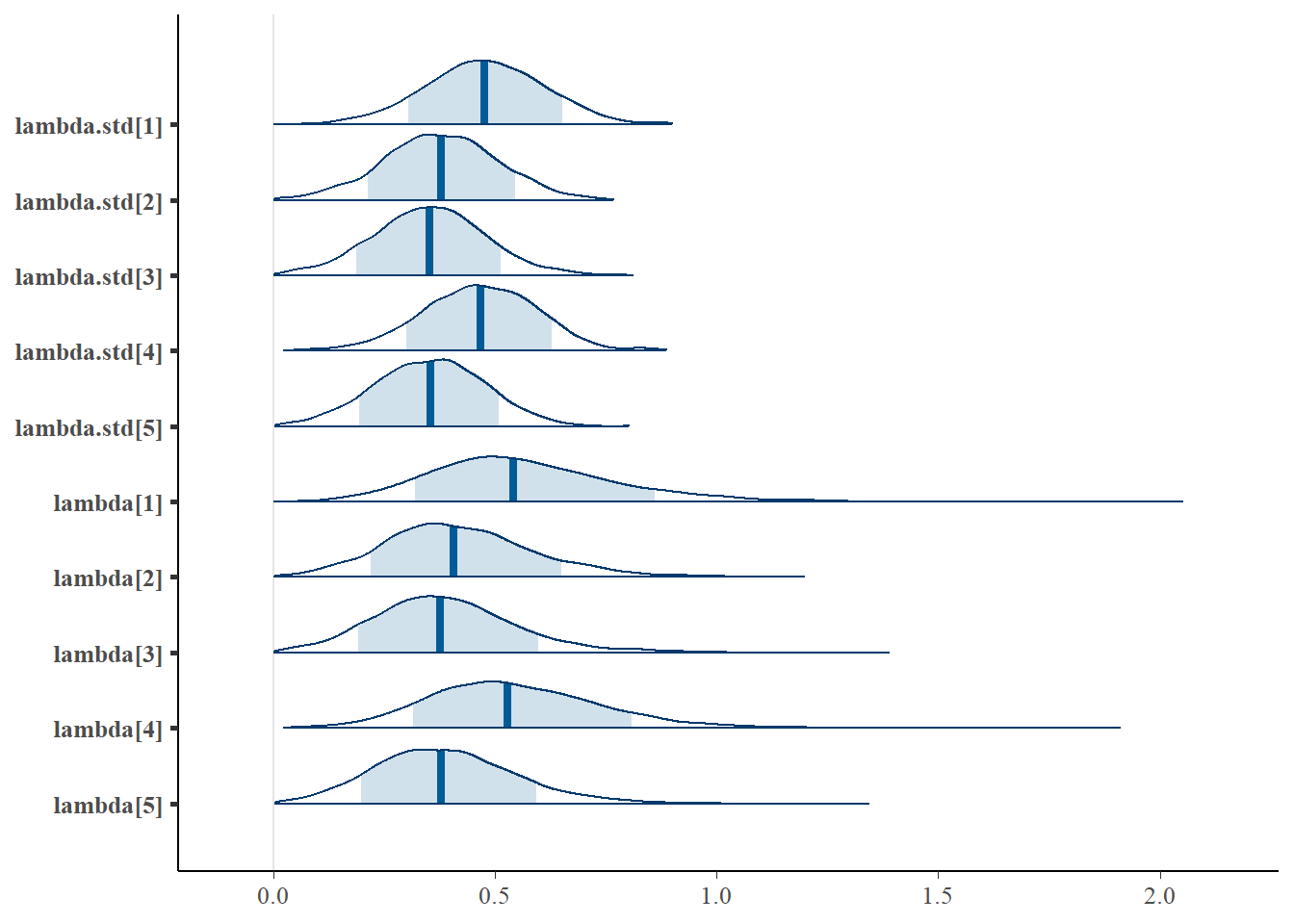

bayesplot::mcmc_areas(fit.mcmc, regex_pars = "lambda", prob = 0.8)

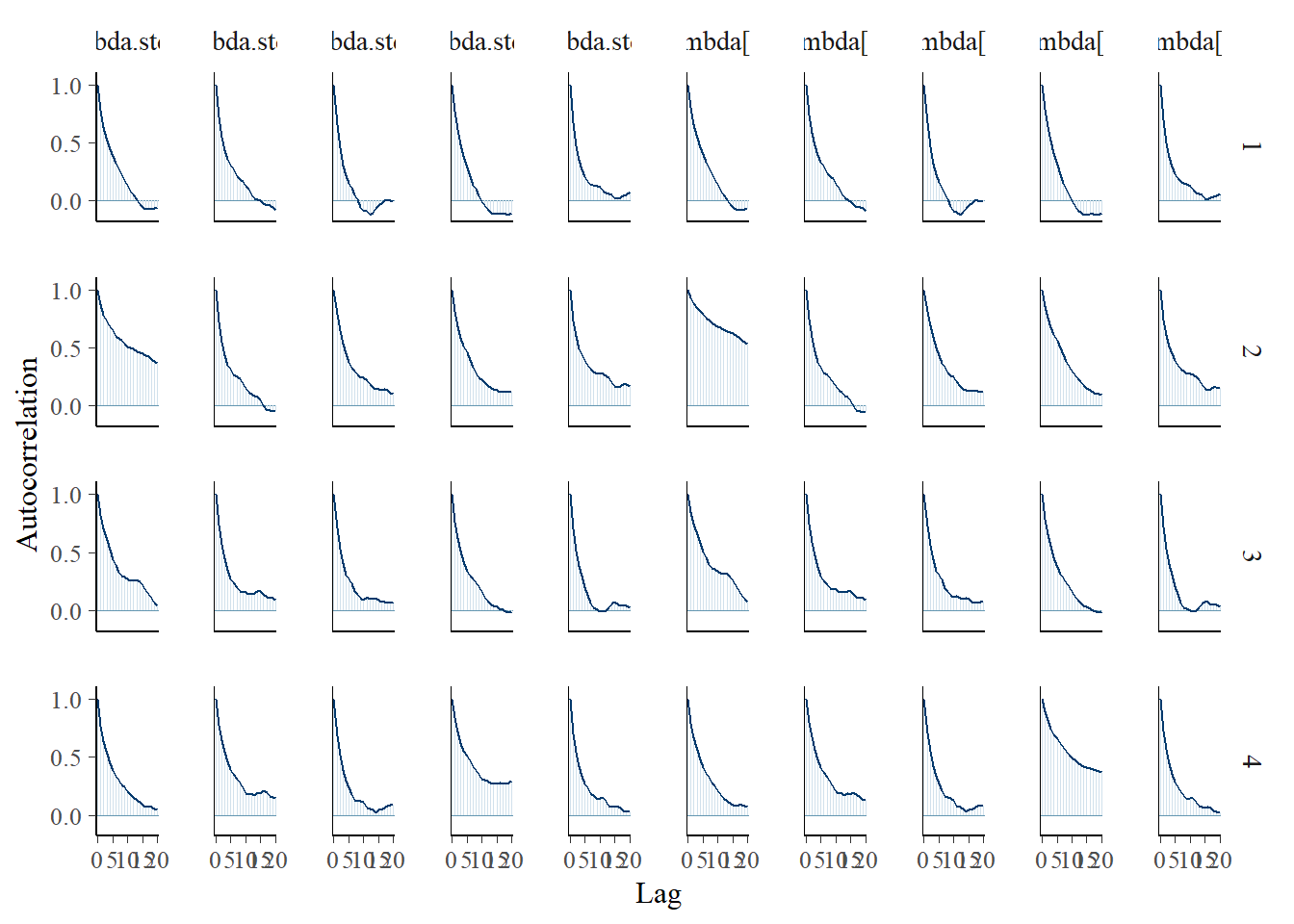

bayesplot::mcmc_acf(fit.mcmc, regex_pars = "lambda")

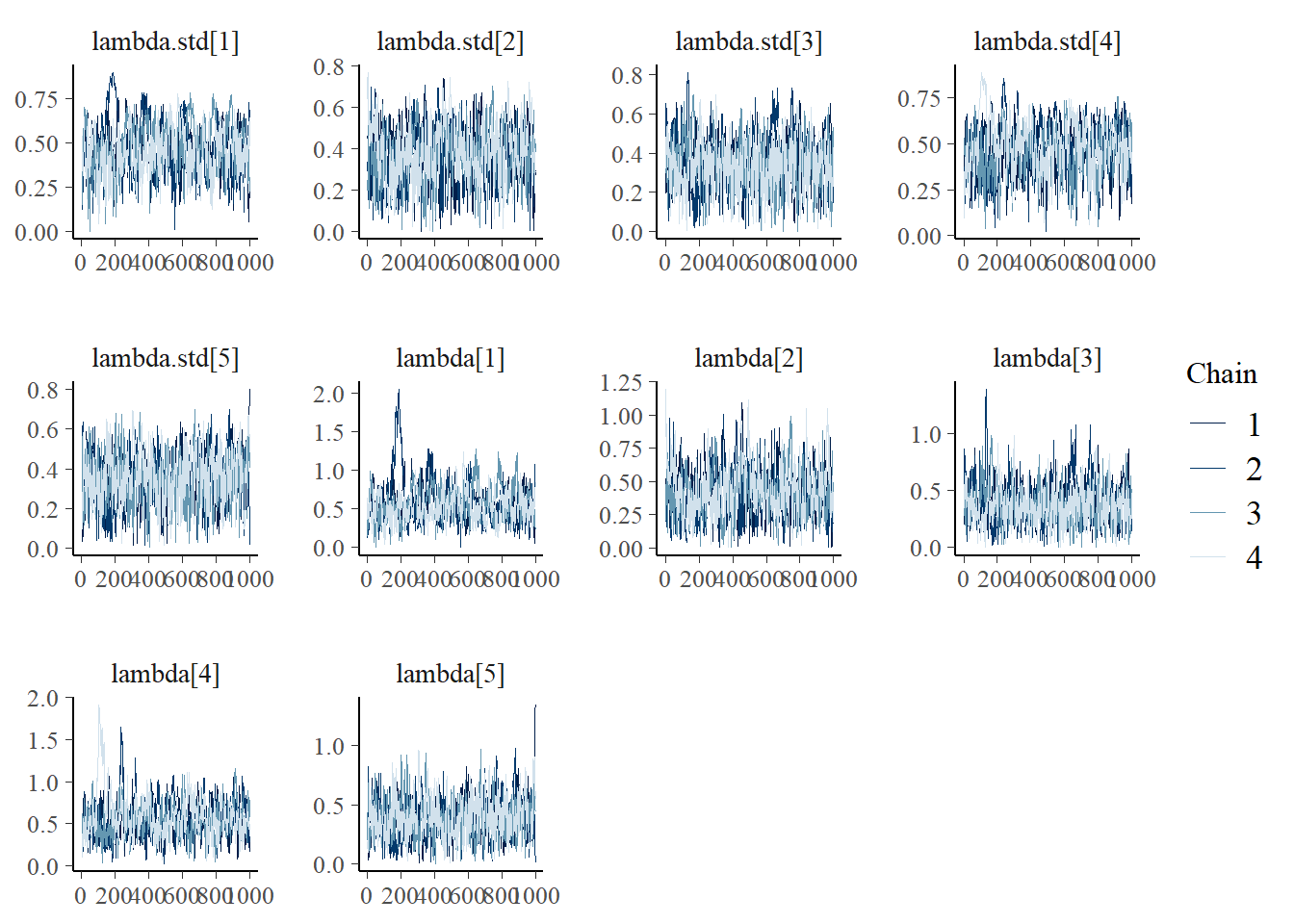

bayesplot::mcmc_trace(fit.mcmc, regex_pars = "lambda")

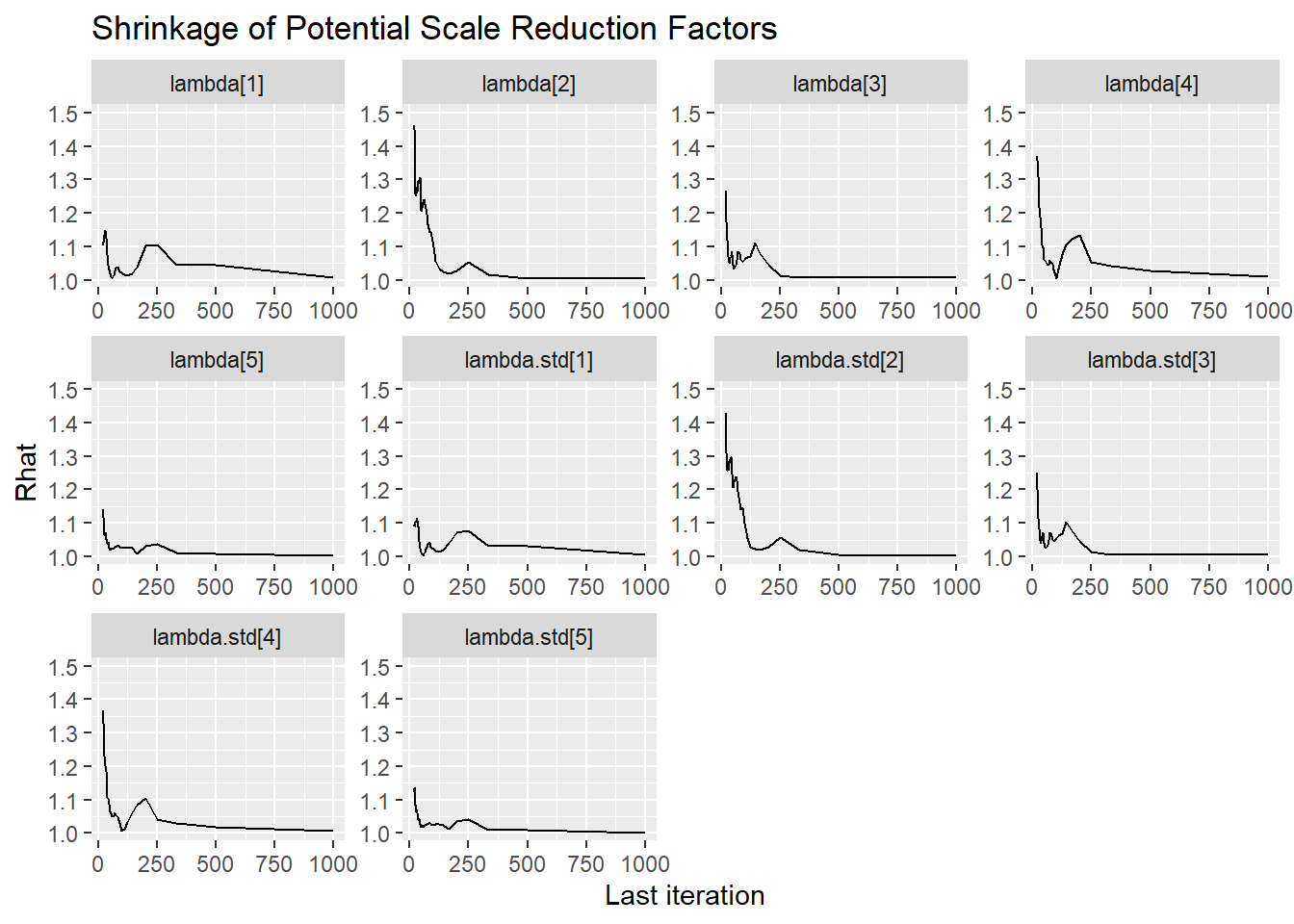

ggmcmc::ggs_grb(fit.mcmc.ggs, family = "lambda")
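The Gelman-Rubin diagnostic plotted by `ggs_grb()` and reported as `Rhat` in the summary compares between-chain and within-chain variability. The sketch below implements the basic textbook form on simulated chains so the mechanics are visible. `rhat_basic()` is a hypothetical helper written for this illustration; it is not the exact estimator used by R2jags, coda, or ggmcmc, which apply additional corrections.

```r
# Basic potential scale reduction factor for one parameter.
# `draws` is an iterations x chains matrix (hypothetical helper).
rhat_basic <- function(draws) {
  n <- nrow(draws)
  chain_means <- colMeans(draws)
  B <- n * var(chain_means)             # between-chain variance
  W <- mean(apply(draws, 2, var))       # average within-chain variance
  var_plus <- (n - 1) / n * W + B / n   # pooled estimate of posterior variance
  sqrt(var_plus / W)
}

set.seed(1)
# Four well-mixed chains targeting the same distribution
good <- matrix(rnorm(4000), ncol = 4)
# Same draws, but the fourth chain is shifted away from the others
bad <- good + rep(c(0, 0, 0, 3), each = 1000)

rhat_good <- rhat_basic(good)
rhat_bad  <- rhat_basic(bad)
print(c(good = rhat_good, bad = rhat_bad))
```

Values near 1 indicate that the chains agree; the shifted chain pushes the statistic well above 1, which is the pattern to look for in the `Rhat` column and the GRB plots.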

bayesplot::mcmc_areas(fit.mcmc, regex_pars = "lambda.std", prob = 0.8); ggsave("fig/study1_model1_lambda_dens.pdf")

Saving 7 x 5 in image

bayesplot::mcmc_acf(fit.mcmc, regex_pars = "lambda.std"); ggsave("fig/study1_model1_lambda_acf.pdf")

Saving 7 x 5 in image

bayesplot::mcmc_trace(fit.mcmc, regex_pars = "lambda.std", nrow=1); ggsave("fig/study1_model1_lambda_trace.pdf")

Warning: The following arguments were unrecognized and ignored: nrow

Saving 7 x 5 in image

ggmcmc::ggs_grb(fit.mcmc.ggs, family = "lambda.std") + theme_bw()+theme(panel.grid = element_blank()); ggsave("fig/study1_model1_lambda_grb.pdf")

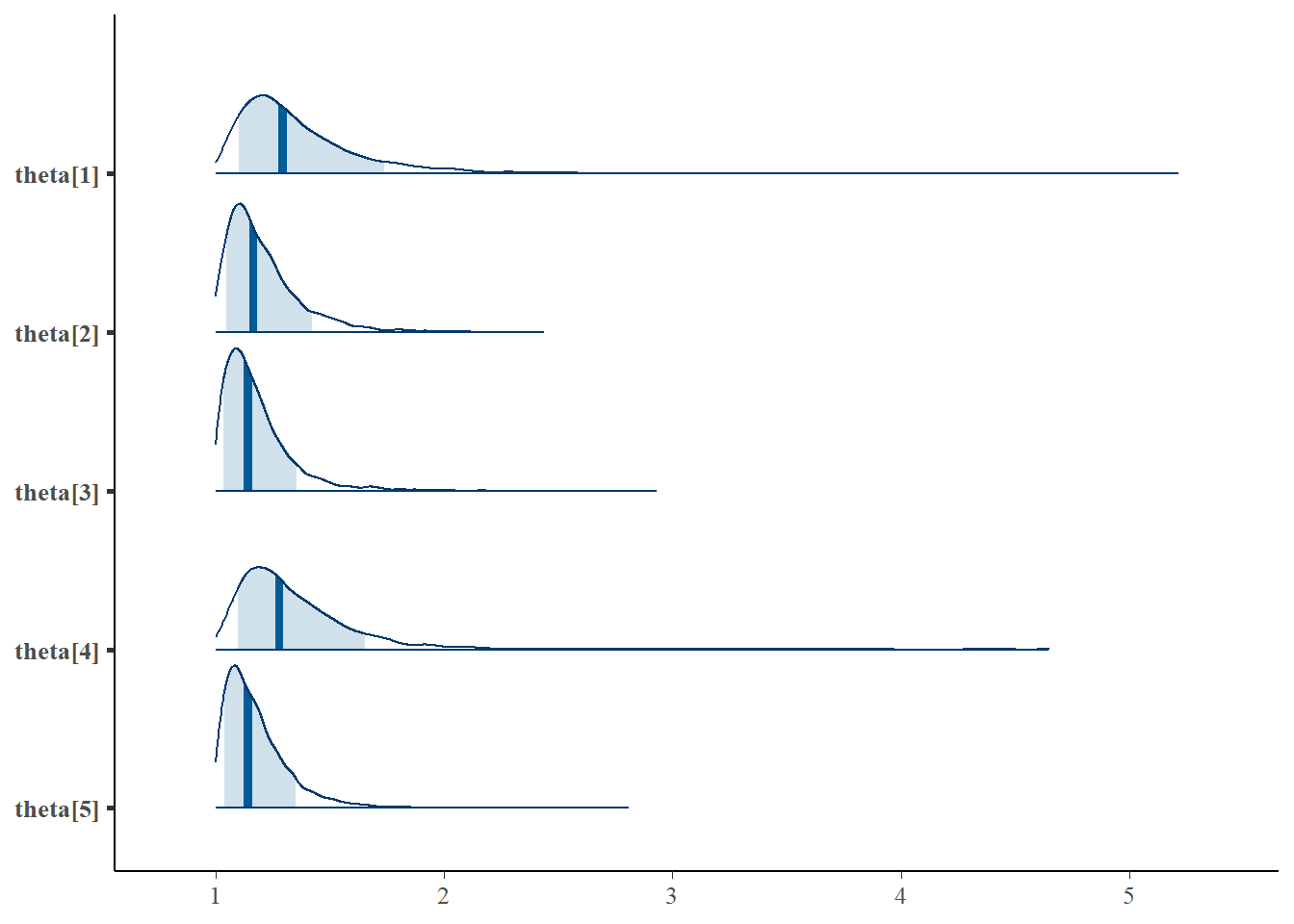

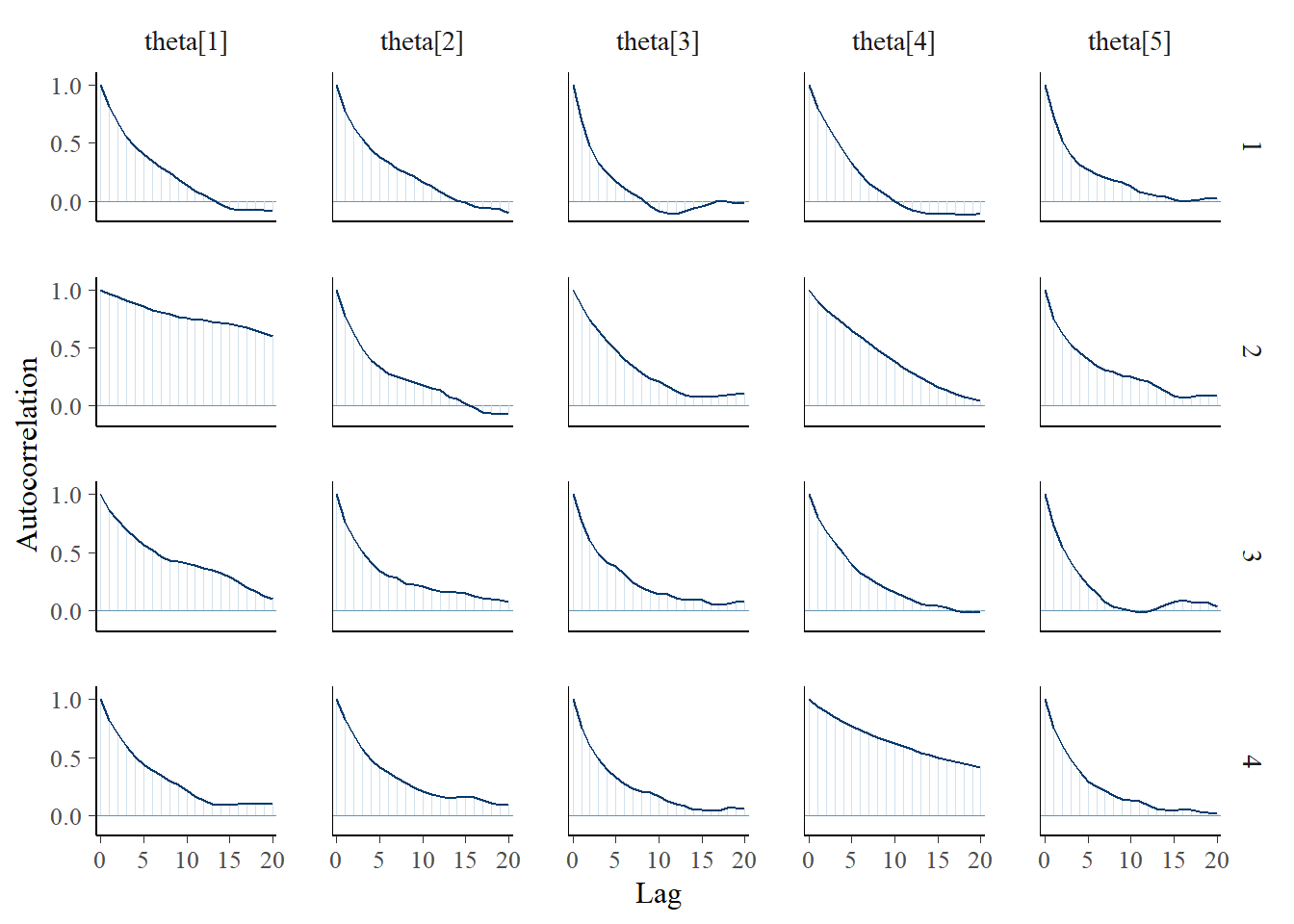

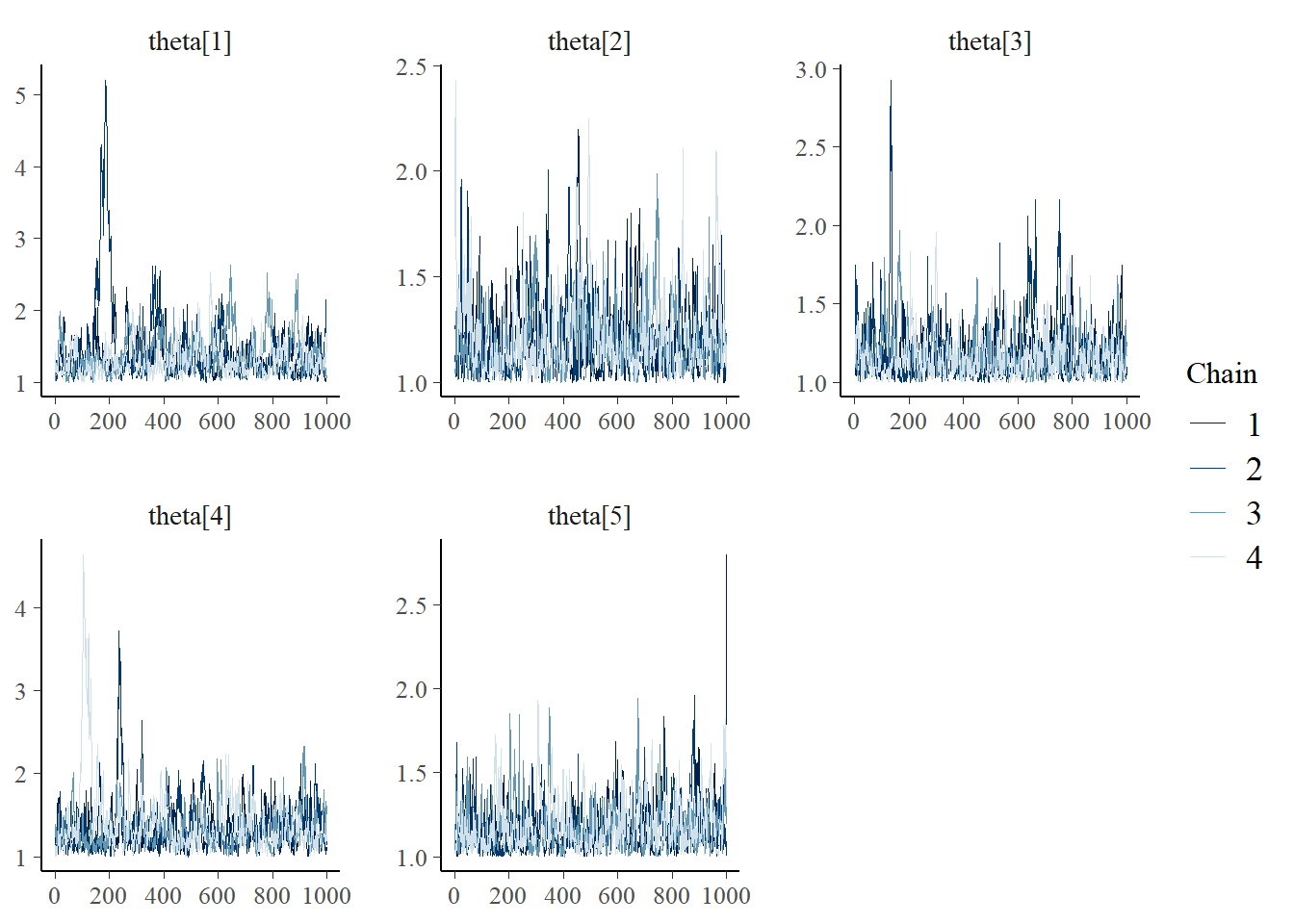

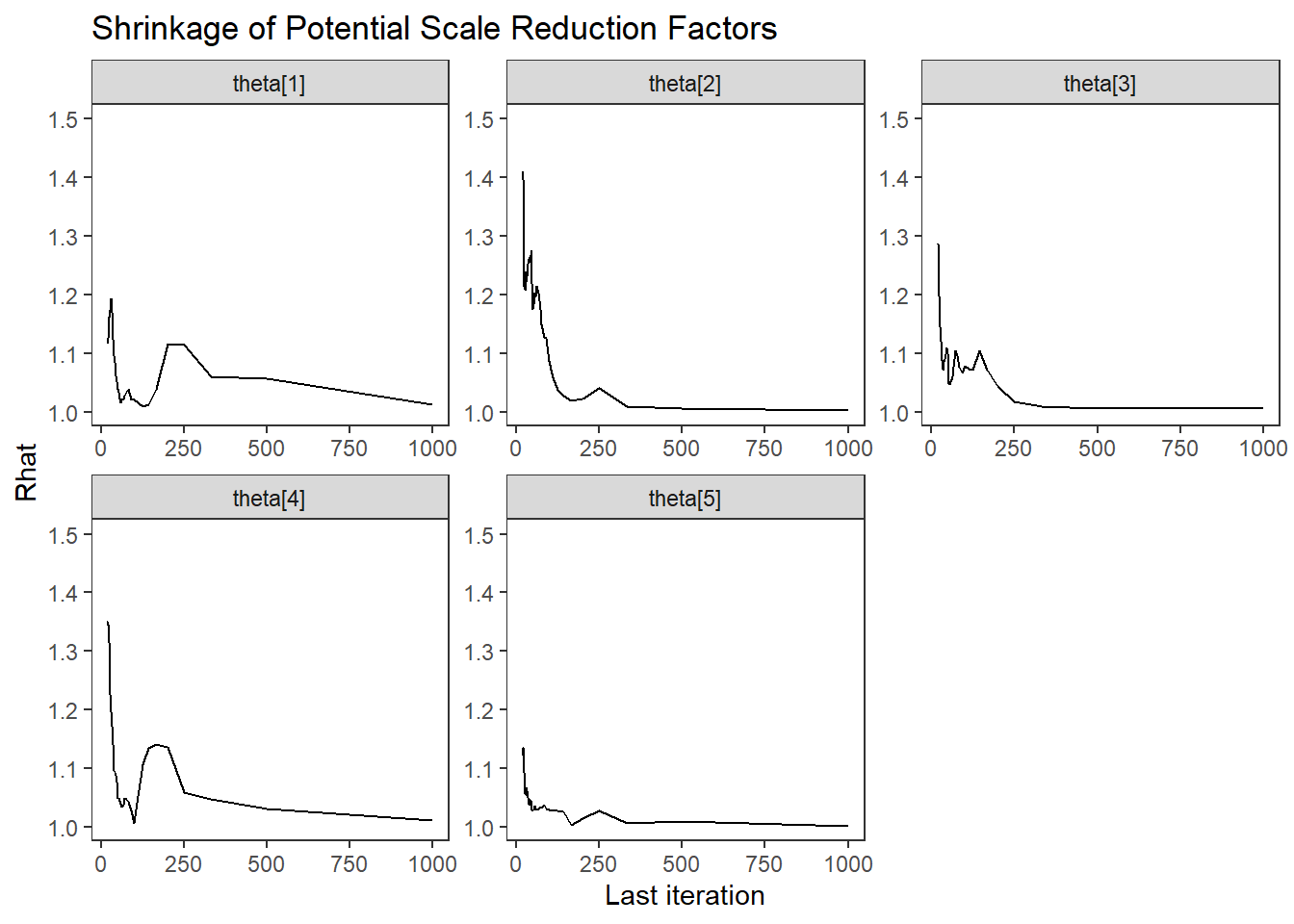

Saving 7 x 5 in image

Latent Response Total Variance (\(\theta\))

bayesplot::mcmc_areas(fit.mcmc, regex_pars = "theta", prob = 0.8); ggsave("fig/study1_model1_theta_dens.pdf")

Saving 7 x 5 in image

bayesplot::mcmc_acf(fit.mcmc, regex_pars = "theta"); ggsave("fig/study1_model1_theta_acf.pdf")

Saving 7 x 5 in image

bayesplot::mcmc_trace(fit.mcmc, regex_pars = "theta"); ggsave("fig/study1_model1_theta_trace.pdf")

Saving 7 x 5 in image

ggmcmc::ggs_grb(fit.mcmc.ggs, family = "theta") + theme_bw()+theme(panel.grid = element_blank()); ggsave("fig/study1_model1_theta_grb.pdf")

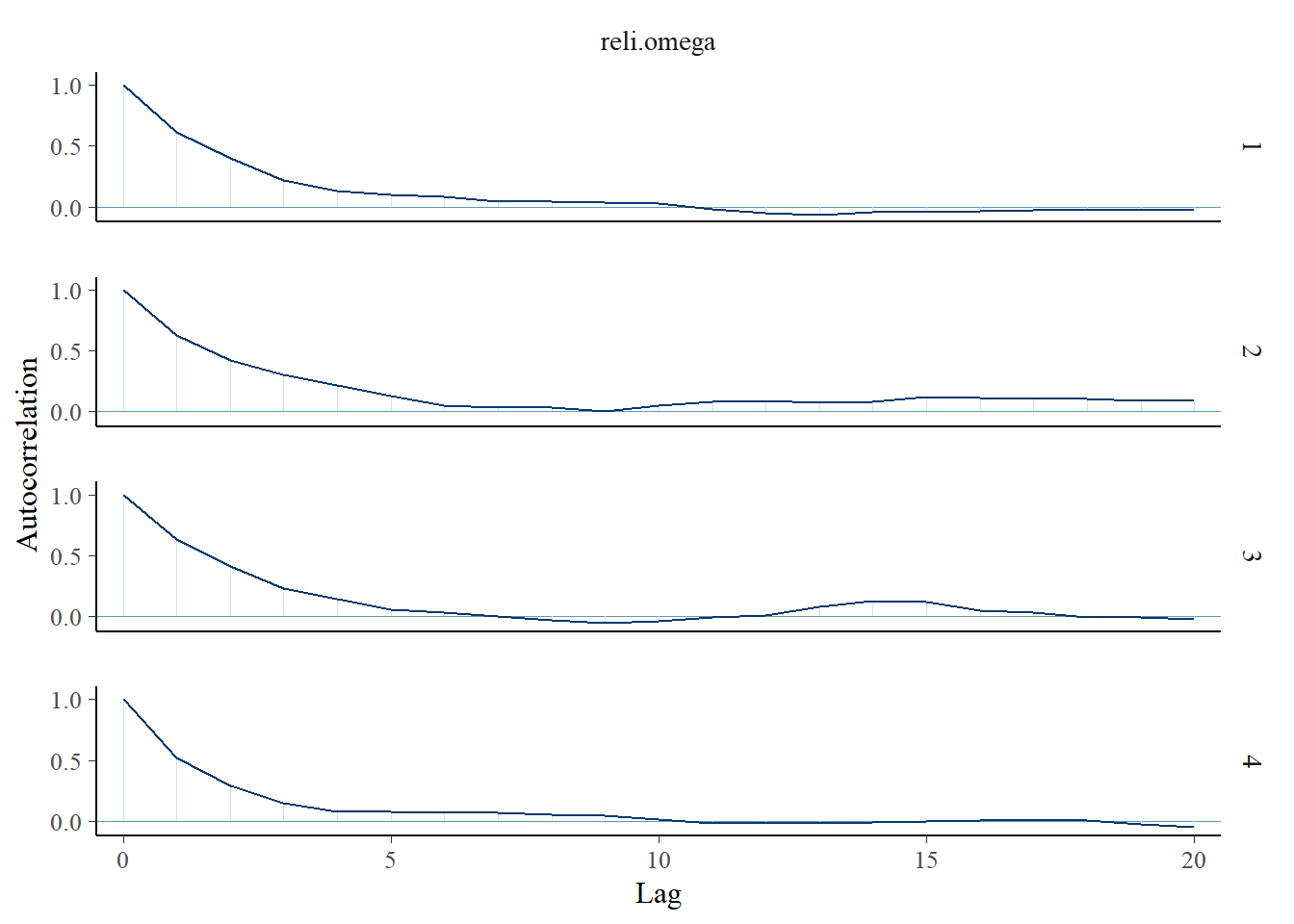

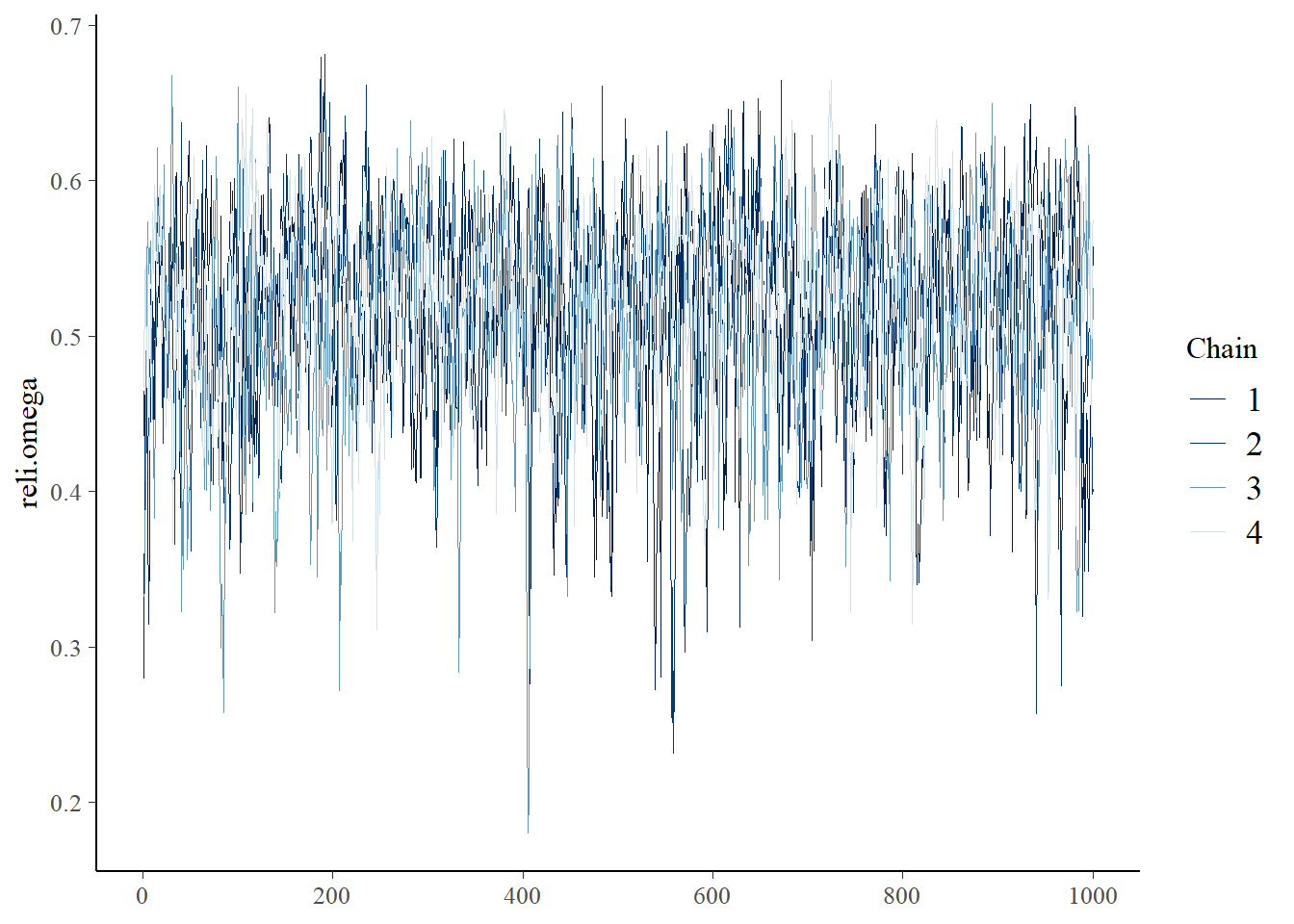

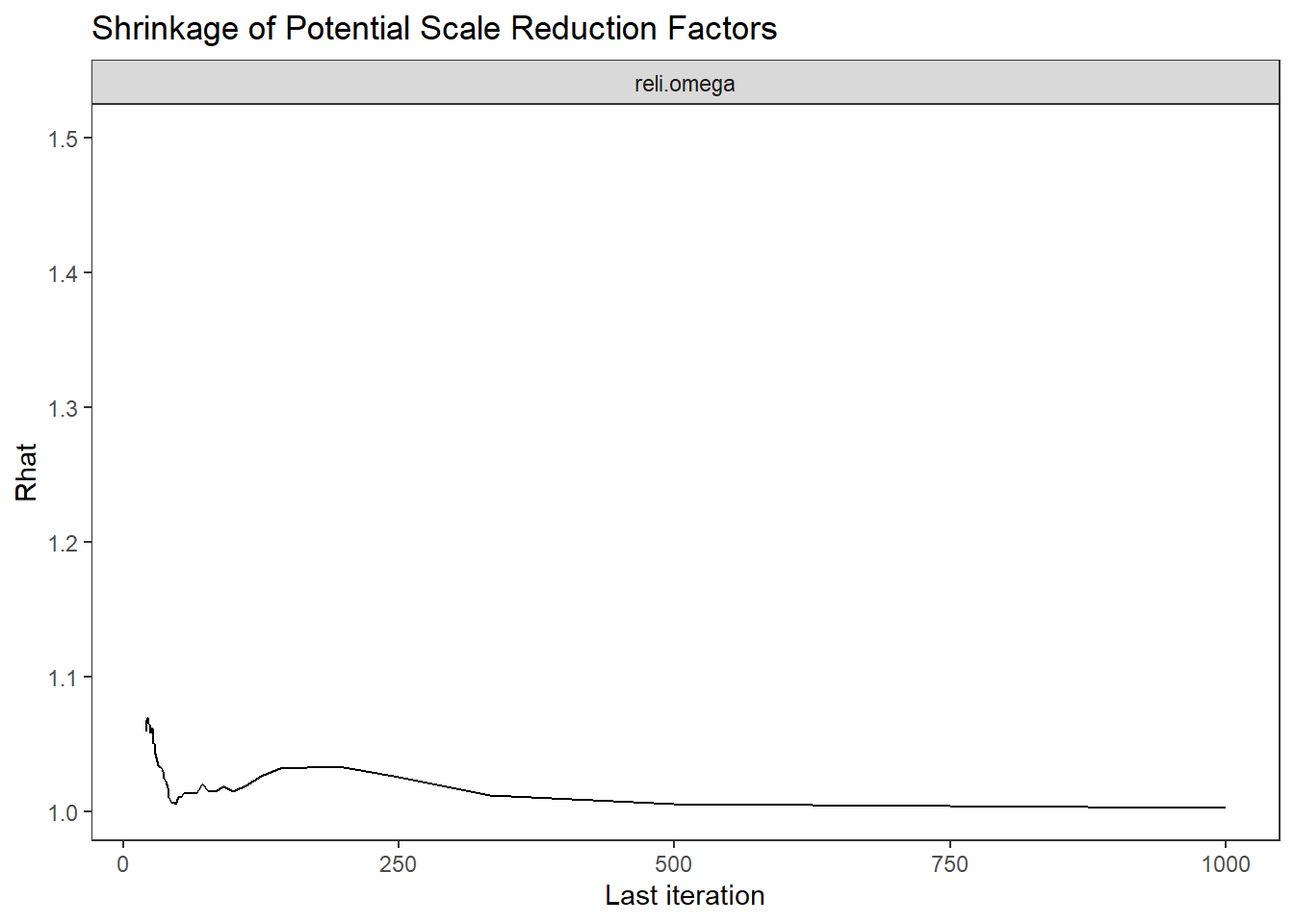

Saving 7 x 5 in image

Factor Reliability Omega (\(\omega\))

bayesplot::mcmc_areas(fit.mcmc, regex_pars = "reli.omega", prob = 0.8); ggsave("fig/study1_model1_omega_dens.pdf")

Saving 7 x 5 in image

bayesplot::mcmc_acf(fit.mcmc, regex_pars = "reli.omega"); ggsave("fig/study1_model1_omega_acf.pdf")

Saving 7 x 5 in image

bayesplot::mcmc_trace(fit.mcmc, regex_pars = "reli.omega"); ggsave("fig/study1_model1_omega_trace.pdf")

Saving 7 x 5 in image

ggmcmc::ggs_grb(fit.mcmc.ggs, family = "reli.omega") + theme_bw()+theme(panel.grid = element_blank()); ggsave("fig/study1_model1_omega_grb.pdf")

Saving 7 x 5 in image

# extract omega posterior for results comparison

extracted_omega <- data.frame(model_1 = fit.mcmc$reli.omega)

write.csv(x=extracted_omega, file=paste0(getwd(),"/data/study_1/extracted_omega_m1.csv"))

Posterior Predictive Distributions

# Posterior Predictive Check

Niter <- 200

model.fit$model$recompile()

Compiling model graph

Resolving undeclared variables

Allocating nodes

Graph information:

Observed stochastic nodes: 2500

Unobserved stochastic nodes: 3015

Total graph size: 25550

Initializing model

fit.extra <- rjags::jags.samples(model.fit$model, variable.names = "pi", n.iter = Niter)

NOTE: Stopping adaptation

N <- model.fit$model$data()[[1]]

nit <- 5

nchain=4

C <- 3

n <- i <- iter <- ppc.row.i <- 1

y.prob.ppc <- array(dim=c(Niter*nchain, nit, C))

for(chain in 1:nchain){

for(iter in 1:Niter){

# initialize simulated y for this iteration

y <- matrix(nrow=N, ncol=nit)

# loop over item

for(i in 1:nit){

# simulated data for item i for each person

for(n in 1:N){

y[n,i] <- sample(1:C, 1, prob = fit.extra$pi[n, i, 1:C, iter, chain])

}

# compute proportion of each response category

for(c in 1:C){

y.prob.ppc[ppc.row.i,i,c] <- sum(y[,i]==c)/N

}

}

# update row of output

ppc.row.i = ppc.row.i + 1

}

}
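The loop above draws one replicated data set per saved iteration from the model-implied category probabilities and records the share of each category per item. The self-contained sketch below shows the same step for a single draw, using made-up probabilities in place of `fit.extra$pi` (an assumption for illustration, since the fitted object is not available here) and `tabulate()` in place of the inner loop over categories.

```r
set.seed(20210401)
N <- 500; nit <- 5; C <- 3

# Hypothetical category probabilities for ONE posterior draw (N x nit x C);
# in the analysis these would come from fit.extra$pi[, , , iter, chain]
raw <- array(runif(N * nit * C), dim = c(N, nit, C))
pi_draw <- raw / array(apply(raw, c(1, 2), sum), dim = c(N, nit, C))

# Draw one replicated response per person and item
y_rep <- matrix(NA_integer_, nrow = N, ncol = nit)
for (i in seq_len(nit)) {
  for (n in seq_len(N)) {
    y_rep[n, i] <- sample.int(C, 1, prob = pi_draw[n, i, ])
  }
}

# Category proportions per item (rows = items, columns = categories)
prop_rep <- t(apply(y_rep, 2, function(col) tabulate(col, nbins = C) / N))
print(round(prop_rep, 3))
```

Repeating this over all saved draws gives the posterior predictive distribution of each category proportion, which is what the boxplots in the posterior predictive check summarize against the observed proportions.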

yppcmat <- matrix(c(y.prob.ppc), ncol=1)

z <- expand.grid(1:(Niter*nchain), 1:nit, 1:C)

yppcmat <- data.frame( iter = z[,1], nit=z[,2], C=z[,3], yppc = yppcmat)

ymat <- model.fit$model$data()[[3]]

y.prob <- matrix(ncol=C, nrow=nit)

for(i in 1:nit){

for(c in 1:C){

y.prob[i,c] <- sum(ymat[,i]==c)/N

}

}

yobsmat <- matrix(c(y.prob), ncol=1)

z <- expand.grid(1:nit, 1:C)

yobsmat <- data.frame(nit=z[,1], C=z[,2], yobs = yobsmat)

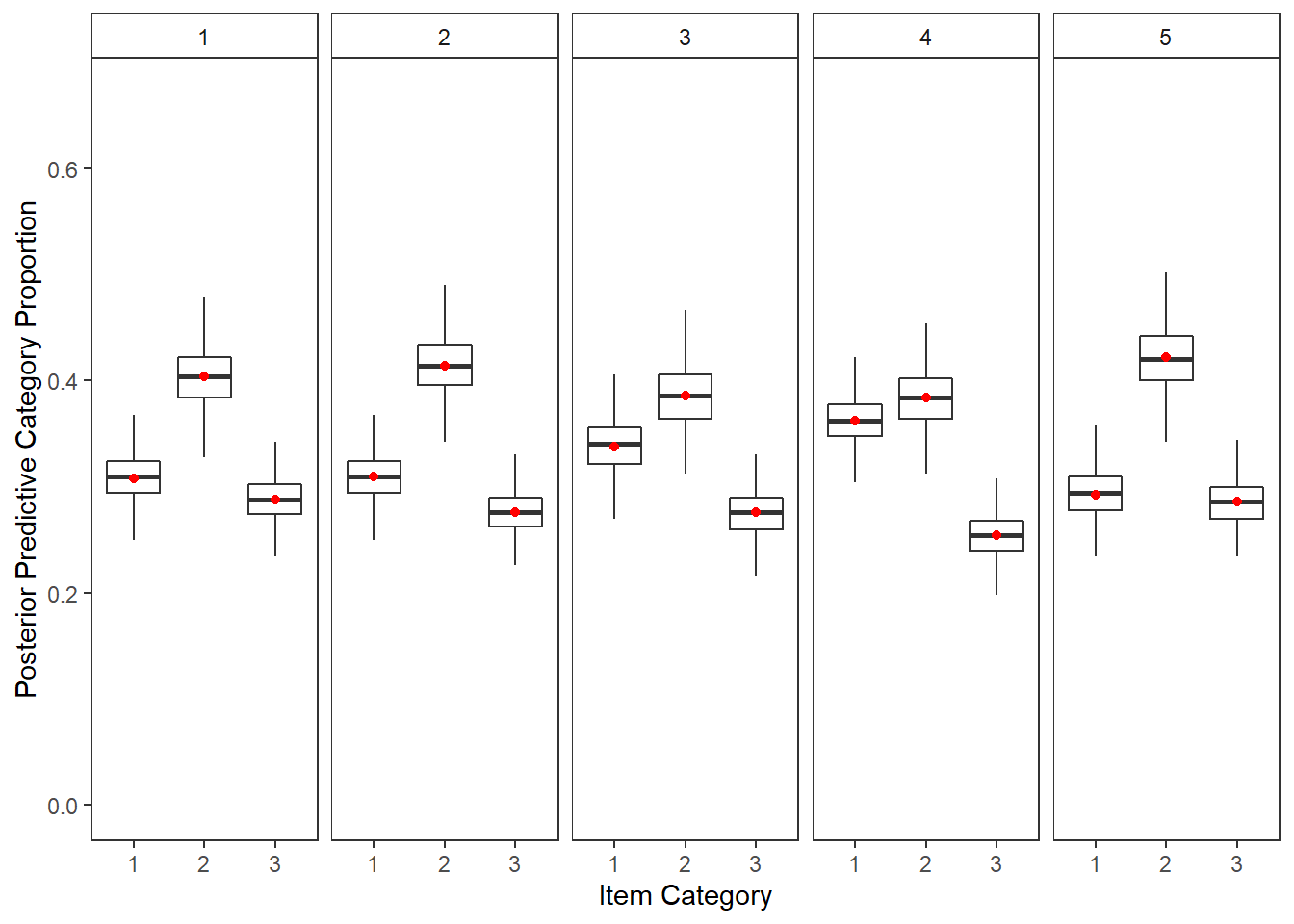

plot.ppc <- full_join(yppcmat, yobsmat)

Joining, by = c("nit", "C")

p <- plot.ppc %>%

mutate(C = as.factor(C),

item = nit) %>%

ggplot()+

geom_boxplot(aes(x=C,y=yppc), outlier.colour = NA)+

geom_point(aes(x=C,y=yobs), color="red")+

lims(y=c(0, 0.67))+

labs(y="Posterior Predictive Category Proportion", x="Item Category")+

facet_wrap(.~nit, nrow=1)+

theme_bw()+

theme(

panel.grid = element_blank(),

strip.background = element_rect(fill="white")

)

p

ggsave(filename = "fig/study1_model1_ppc_y.pdf",plot=p,width = 6, height=4,units="in")

ggsave(filename = "fig/study1_model1_ppc_y.png",plot=p,width = 6, height=4,units="in")

ggsave(filename = "fig/study1_model1_ppc_y.eps",plot=p,width = 6, height=4,units="in")

Manuscript Table and Figures

Table

# print to xtable

print(

xtable(

model.fit$BUGSoutput$summary,

caption = c("study1 Model 1 posterior distribution summary")

,align = "lrrrrrrrrr"

),

include.rownames=T,

booktabs=T

)

% latex table generated in R 4.0.5 by xtable 1.8-4 package

% Tue Mar 08 18:18:40 2022

\begin{table}[ht]

\centering

\begin{tabular}{lrrrrrrrrr}

\toprule

& mean & sd & 2.5\% & 25\% & 50\% & 75\% & 97.5\% & Rhat & n.eff \\

\midrule

deviance & 3947.90 & 68.60 & 3811.24 & 3902.43 & 3948.08 & 3993.95 & 4080.36 & 1.00 & 760.00 \\

lambda[1] & 0.57 & 0.23 & 0.21 & 0.42 & 0.54 & 0.69 & 1.11 & 1.02 & 500.00 \\

lambda[2] & 0.42 & 0.17 & 0.12 & 0.30 & 0.41 & 0.52 & 0.80 & 1.00 & 760.00 \\

lambda[3] & 0.39 & 0.17 & 0.10 & 0.28 & 0.38 & 0.48 & 0.77 & 1.01 & 400.00 \\

lambda[4] & 0.55 & 0.22 & 0.21 & 0.41 & 0.53 & 0.67 & 1.02 & 1.01 & 360.00 \\

lambda[5] & 0.39 & 0.16 & 0.11 & 0.28 & 0.38 & 0.49 & 0.73 & 1.00 & 750.00 \\

lambda.std[1] & 0.48 & 0.14 & 0.21 & 0.39 & 0.48 & 0.57 & 0.74 & 1.01 & 580.00 \\

lambda.std[2] & 0.38 & 0.13 & 0.12 & 0.29 & 0.38 & 0.46 & 0.63 & 1.00 & 860.00 \\

lambda.std[3] & 0.35 & 0.13 & 0.10 & 0.27 & 0.35 & 0.43 & 0.61 & 1.02 & 410.00 \\

lambda.std[4] & 0.47 & 0.13 & 0.20 & 0.38 & 0.47 & 0.55 & 0.71 & 1.01 & 480.00 \\

lambda.std[5] & 0.35 & 0.12 & 0.11 & 0.27 & 0.35 & 0.44 & 0.59 & 1.01 & 760.00 \\

reli.omega & 0.52 & 0.06 & 0.38 & 0.48 & 0.52 & 0.56 & 0.62 & 1.01 & 500.00 \\

tau[1,1] & -0.77 & 0.10 & -0.98 & -0.83 & -0.77 & -0.70 & -0.58 & 1.01 & 1100.00 \\

tau[2,1] & -0.73 & 0.09 & -0.92 & -0.80 & -0.73 & -0.67 & -0.56 & 1.00 & 2500.00 \\

tau[3,1] & -0.62 & 0.09 & -0.80 & -0.68 & -0.61 & -0.56 & -0.45 & 1.00 & 3900.00 \\

tau[4,1] & -0.54 & 0.09 & -0.73 & -0.60 & -0.54 & -0.48 & -0.36 & 1.01 & 1000.00 \\

tau[5,1] & -0.81 & 0.09 & -0.98 & -0.87 & -0.81 & -0.75 & -0.63 & 1.00 & 1300.00 \\

tau[1,2] & 0.86 & 0.11 & 0.67 & 0.79 & 0.85 & 0.93 & 1.09 & 1.01 & 750.00 \\

tau[2,2] & 0.88 & 0.10 & 0.70 & 0.82 & 0.88 & 0.95 & 1.08 & 1.00 & 850.00 \\

tau[3,2] & 0.88 & 0.09 & 0.71 & 0.82 & 0.88 & 0.94 & 1.06 & 1.00 & 1100.00 \\

tau[4,2] & 1.01 & 0.11 & 0.81 & 0.94 & 1.01 & 1.07 & 1.24 & 1.00 & 1400.00 \\

tau[5,2] & 0.83 & 0.09 & 0.66 & 0.77 & 0.83 & 0.89 & 1.01 & 1.00 & 4000.00 \\

theta[1] & 1.38 & 0.36 & 1.05 & 1.18 & 1.29 & 1.48 & 2.23 & 1.06 & 200.00 \\

theta[2] & 1.21 & 0.17 & 1.01 & 1.09 & 1.17 & 1.27 & 1.65 & 1.01 & 470.00 \\

theta[3] & 1.18 & 0.16 & 1.01 & 1.08 & 1.14 & 1.23 & 1.59 & 1.03 & 250.00 \\

theta[4] & 1.35 & 0.32 & 1.04 & 1.17 & 1.28 & 1.45 & 2.04 & 1.04 & 180.00 \\

theta[5] & 1.18 & 0.14 & 1.01 & 1.08 & 1.14 & 1.24 & 1.54 & 1.00 & 830.00 \\

\bottomrule

\end{tabular}

\caption{study1 Model 1 posterior distribution summary}

\end{table}

Figure

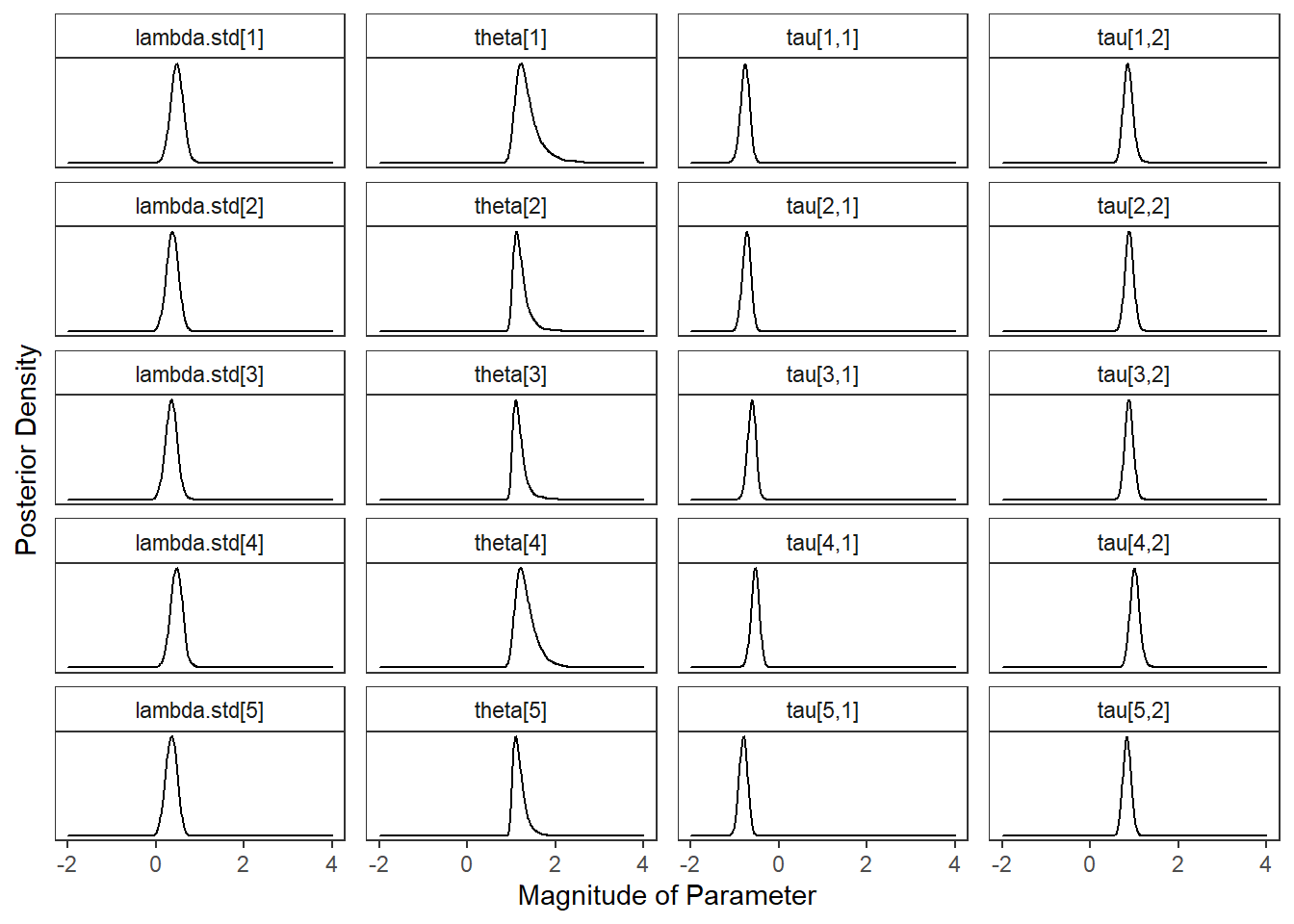

plot.dat <- fit.mcmc %>%

select(!c("iter", "deviance", "reli.omega", paste0("lambda[",1:5,"]")))%>%

pivot_longer(

cols= !c("chain"),

names_to="variable",

values_to="value"

) %>%

mutate(

variable = factor(

variable,

levels = c(

"lambda.std[1]", "theta[1]", "tau[1,1]", "tau[1,2]",

"lambda.std[2]", "theta[2]", "tau[2,1]", "tau[2,2]",

"lambda.std[3]", "theta[3]", "tau[3,1]", "tau[3,2]",

"lambda.std[4]", "theta[4]", "tau[4,1]", "tau[4,2]",

"lambda.std[5]", "theta[5]", "tau[5,1]", "tau[5,2]"

), ordered = T

)

)

p <- ggplot(plot.dat, aes(x=value, group=variable))+

geom_density(adjust=2)+

facet_wrap(variable~., scales="free_y", ncol=4) +

lims(x=c(-2, 4))+

labs(x="Magnitude of Parameter",

y="Posterior Density")+

theme_bw()+

theme(

panel.grid = element_blank(),

strip.background = element_rect(fill="white"),

axis.text.y = element_blank(),

axis.ticks.y = element_blank()

)

p

Warning: Removed 20 rows containing non-finite values (stat_density).

ggsave(filename = "fig/study1_model1_posterior_dist.pdf",plot=p,width = 7, height=5,units="in")

Warning: Removed 20 rows containing non-finite values (stat_density).

ggsave(filename = "fig/study1_model1_posterior_dist.png",plot=p,width = 7, height=5,units="in")

Warning: Removed 20 rows containing non-finite values (stat_density).

ggsave(filename = "fig/study1_model1_posterior_dist.eps",plot=p,width = 7, height=5,units="in")

Warning: Removed 20 rows containing non-finite values (stat_density).

sessionInfo()

R version 4.0.5 (2021-03-31)

Platform: x86_64-w64-mingw32/x64 (64-bit)

Running under: Windows 10 x64 (build 22000)

Matrix products: default

locale:

[1] LC_COLLATE=English_United States.1252

[2] LC_CTYPE=English_United States.1252

[3] LC_MONETARY=English_United States.1252

[4] LC_NUMERIC=C

[5] LC_TIME=English_United States.1252

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] car_3.0-10 carData_3.0-4 mvtnorm_1.1-1

[4] LaplacesDemon_16.1.4 runjags_2.2.0-2 lme4_1.1-26

[7] Matrix_1.3-2 sirt_3.9-4 R2jags_0.6-1

[10] rjags_4-12 eRm_1.0-2 diffIRT_1.5

[13] statmod_1.4.35 xtable_1.8-4 kableExtra_1.3.4

[16] lavaan_0.6-10 polycor_0.7-10 bayesplot_1.8.0

[19] ggmcmc_1.5.1.1 coda_0.19-4 data.table_1.14.0

[22] patchwork_1.1.1 forcats_0.5.1 stringr_1.4.0

[25] dplyr_1.0.5 purrr_0.3.4 readr_1.4.0

[28] tidyr_1.1.3 tibble_3.1.0 ggplot2_3.3.5

[31] tidyverse_1.3.0 workflowr_1.6.2

loaded via a namespace (and not attached):

[1] minqa_1.2.4 TAM_3.5-19 colorspace_2.0-0 rio_0.5.26

[5] ellipsis_0.3.1 ggridges_0.5.3 rprojroot_2.0.2 fs_1.5.0

[9] rstudioapi_0.13 farver_2.1.0 fansi_0.4.2 lubridate_1.7.10

[13] xml2_1.3.2 splines_4.0.5 mnormt_2.0.2 knitr_1.31

[17] jsonlite_1.7.2 nloptr_1.2.2.2 broom_0.7.5 dbplyr_2.1.0

[21] compiler_4.0.5 httr_1.4.2 backports_1.2.1 assertthat_0.2.1

[25] cli_2.3.1 later_1.1.0.1 htmltools_0.5.1.1 tools_4.0.5

[29] gtable_0.3.0 glue_1.4.2 reshape2_1.4.4 Rcpp_1.0.7

[33] cellranger_1.1.0 jquerylib_0.1.3 vctrs_0.3.6 svglite_2.0.0

[37] nlme_3.1-152 psych_2.0.12 xfun_0.21 ps_1.6.0

[41] openxlsx_4.2.3 rvest_1.0.0 lifecycle_1.0.0 MASS_7.3-53.1

[45] scales_1.1.1 ragg_1.1.1 hms_1.0.0 promises_1.2.0.1

[49] parallel_4.0.5 RColorBrewer_1.1-2 curl_4.3 yaml_2.2.1

[53] sass_0.3.1 reshape_0.8.8 stringi_1.5.3 highr_0.8

[57] zip_2.1.1 boot_1.3-27 rlang_0.4.10 pkgconfig_2.0.3

[61] systemfonts_1.0.1 evaluate_0.14 lattice_0.20-41 labeling_0.4.2

[65] tidyselect_1.1.0 GGally_2.1.1 plyr_1.8.6 magrittr_2.0.1

[69] R6_2.5.0 generics_0.1.0 DBI_1.1.1 foreign_0.8-81

[73] pillar_1.5.1 haven_2.3.1 withr_2.4.1 abind_1.4-5

[77] modelr_0.1.8 crayon_1.4.1 utf8_1.1.4 tmvnsim_1.0-2

[81] rmarkdown_2.7 grid_4.0.5 readxl_1.3.1 CDM_7.5-15

[85] pbivnorm_0.6.0 git2r_0.28.0 reprex_1.0.0 digest_0.6.27

[89] webshot_0.5.2 httpuv_1.5.5 textshaping_0.3.1 stats4_4.0.5

[93] munsell_0.5.0 viridisLite_0.3.0 bslib_0.2.4 R2WinBUGS_2.1-21